Most "what is agentic AI" posts spend 2,000 words avoiding the actual definition. Here it is in one line: an AI system that takes actions, observes the result, and revises its plan in a loop until the goal is met or the system gives up. Everything else in this post is detail under that one line.

We ship agentic systems for clients — outbound prospecting, content production, ops automation. The framing below is what we mean by "agentic" when we put one into production, not what the term means in a research paper.

You can think of a standard chatbot as a function: prompt in, text out. An agentic system is a controller: a goal goes in, a sequence of tool calls and decisions comes out, and the side effects land in real systems.

How agentic AI is different from a chatbot

A chatbot answers your question. An agent answers your question by doing work — checking your calendar, drafting a reply, updating a CRM record, kicking off a workflow.

The two key ingredients are:

- A loop. The model can call tools, see what happened, and decide what to do next.

- Tools. The model has access to real APIs (calendars, CRMs, databases, code execution).

Without the loop, you have a chatbot with plugins. Without tools, you have a long monologue. Agentic AI requires both.

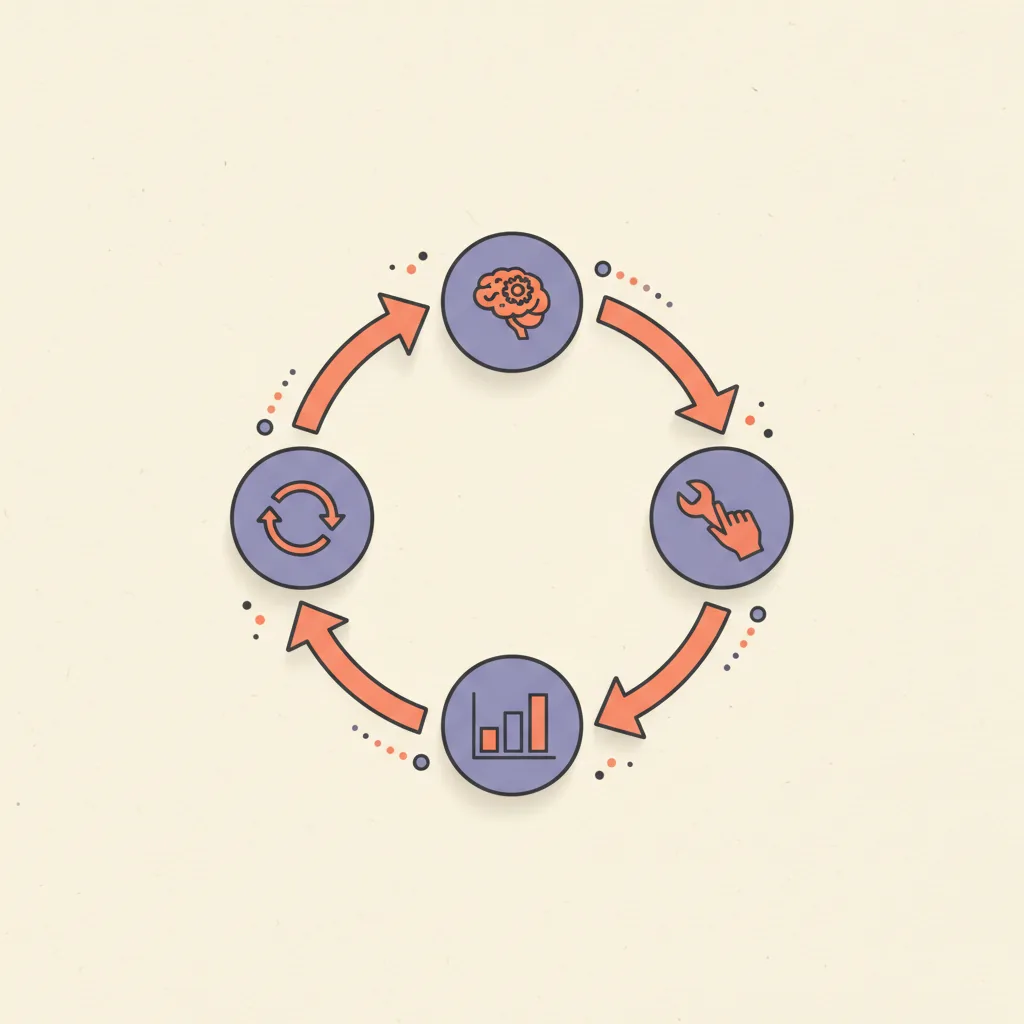

A typical agent loop

A production agent loop runs five stages: receive a goal, plan the next step, call a tool, observe the result, and decide whether to stop. Most runs finish within 3–8 cycles. Anything longer usually means the goal was poorly defined or the agent is stuck retrying the same failed tool. The shape is the same whether you build it on Claude Agents, LangGraph, OpenAI Assistants, or a hand-rolled loop in Python.

- Receive a goal (e.g. "book a discovery call with this lead next week").

- Plan the next step ("check the lead's timezone, then check our calendar availability, then send a Calendly link by email").

- Call a tool (e.g. lookup_lead, get_calendar_availability, send_email).

- Observe the result (success, failure, unexpected data).

- Decide whether the goal is met. If yes, stop. If no, plan the next step.

Most production agents run for 3–8 steps before stopping. Anything longer usually means the goal was poorly defined or the agent is stuck in a retry loop.
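The five stages above can be sketched as a hand-rolled loop in Python. This is a minimal illustration, not a production implementation: the three tools are stubs that reuse the example names from the list, `plan_next_step` is a hard-coded stand-in for the model call, and `AgentState`, `goal_met`, and `max_steps` are names assumed for this sketch.

```python
from dataclasses import dataclass, field

# Hypothetical tools, stubbed so the sketch runs without external services.
def lookup_lead(lead_id):
    return {"lead_id": lead_id, "timezone": "Europe/Berlin"}

def get_calendar_availability(timezone):
    return {"timezone": timezone, "slots": ["Tue 10:00", "Wed 15:00"]}

def send_email(lead_id, slots):
    return {"sent": True, "lead_id": lead_id, "slots": slots}

TOOLS = {
    "lookup_lead": lookup_lead,
    "get_calendar_availability": get_calendar_availability,
    "send_email": send_email,
}

@dataclass
class AgentState:
    goal: str
    lead_id: str
    observations: list = field(default_factory=list)  # (tool_name, result) pairs
    done: bool = False

def successful_results(state):
    """Latest successful result per tool; failed calls are ignored so they get retried."""
    return {name: result for name, result in state.observations if "error" not in result}

def plan_next_step(state):
    """Stand-in for the model call: pick the next tool from what has been observed."""
    results = successful_results(state)
    if "lookup_lead" not in results:
        return "lookup_lead", {"lead_id": state.lead_id}
    if "get_calendar_availability" not in results:
        return "get_calendar_availability", {"timezone": results["lookup_lead"]["timezone"]}
    return "send_email", {
        "lead_id": state.lead_id,
        "slots": results["get_calendar_availability"]["slots"],
    }

def goal_met(state):
    return successful_results(state).get("send_email", {}).get("sent", False)

def run_agent(goal, lead_id, max_steps=8):
    state = AgentState(goal=goal, lead_id=lead_id)   # 1. receive a goal
    for _ in range(max_steps):
        name, args = plan_next_step(state)           # 2. plan the next step
        try:
            result = TOOLS[name](**args)             # 3. call a tool
        except Exception as exc:
            result = {"error": str(exc)}
        state.observations.append((name, result))    # 4. observe the result
        if goal_met(state):                          # 5. decide whether to stop
            state.done = True
            break
    return state
```

With these stubs, `run_agent("book a discovery call with this lead next week", "lead-42")` stops after three tool calls. The `max_steps` cap is what turns a stuck retry loop into a visible failure (`done` stays `False`) instead of an unbounded run.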

Where agentic AI works in business

Agentic systems shine in narrow, high-volume operational tasks where the work is repetitive but each instance has small variations. Examples we ship for clients:

- SDR outbound — research a lead, personalize a message, schedule a follow-up sequence.

- Support triage — categorize an inbound ticket, attempt deflection with a knowledge base, escalate if confidence is low.

- Inbox management — read incoming email, draft replies, attach context from CRM, queue for human review.

- Internal ops — generate weekly reports by querying multiple systems, summarize, post to Slack.

- Lead routing — score, enrich, assign to the right human or sequence based on real-time data.

Where they fail: open-ended creative work, anything requiring real judgment about people, anything where the cost of a wrong action is high relative to the value of a right action.

What makes an agent reliable in production

Reliable production agents share five structural properties: narrow scope (one agent, one job, never a "general business assistant"), whitelisted tools (the agent only sees what it actually needs), per-step evaluation (every tool call gets scored, with hard-stops on confidence drops), human approval on irreversible actions (external emails, billing, code deploys), and full observability (every input, output, and reasoning step logged). Agents that skip any one of these fail in production within the first month, usually through a quiet class of errors that nobody notices until a customer complains.

- Narrow scope. One agent, one job. Don't build a "general business assistant".

- Constrained tools. Whitelist exactly what it can do. Don't give it shell access "in case it's useful".

- Per-step evaluation. Score each tool call. Hard-stop on confidence drops.

- Human-in-the-loop on anything irreversible. Sending external emails, billing actions, code deploys all need approval.

- Observability. Log every tool call with inputs, outputs, and the reasoning trace. Without this, debugging is impossible.
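Several of these properties can live in one thin wrapper around tool calls. The sketch below is one possible shape, not a reference design: `GuardedTools`, the `approve` callback (standing in for a human approval step), the 0.7 threshold, and the idea that each call arrives with a `confidence` score are all assumptions made for illustration.

```python
class ToolNotAllowed(Exception):
    pass

class LowConfidence(Exception):
    pass

class ApprovalRequired(Exception):
    pass

class GuardedTools:
    def __init__(self, approve, min_confidence=0.7):
        self._tools = {}                  # whitelist: name -> (function, irreversible flag)
        self._approve = approve           # callback standing in for human approval
        self._min_confidence = min_confidence
        self.audit_log = []               # one entry per attempted call

    def register(self, name, fn, irreversible=False):
        self._tools[name] = (fn, irreversible)

    def call(self, name, args, reasoning, confidence):
        entry = {"tool": name, "args": args, "reasoning": reasoning, "confidence": confidence}
        self.audit_log.append(entry)      # log first, so blocked calls are visible too
        if name not in self._tools:
            entry["outcome"] = "rejected: not whitelisted"
            raise ToolNotAllowed(name)
        if confidence < self._min_confidence:
            entry["outcome"] = "stopped: low confidence"
            raise LowConfidence(name)
        fn, irreversible = self._tools[name]
        if irreversible and not self._approve(name, args):
            entry["outcome"] = "blocked: approval denied"
            raise ApprovalRequired(name)
        entry["output"] = fn(**args)
        entry["outcome"] = "ok"
        return entry["output"]
```

A usage sketch: register `lookup_lead` as a normal tool and `send_email` with `irreversible=True`. The agent can then look up leads freely, but every email attempt goes through `approve`, and any tool that was never registered (shell access, for example) raises `ToolNotAllowed`. All of these attempts, including the blocked ones, end up in `audit_log`.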

Common architectures

| Pattern | How it works | Pros | Cons | When to use |
|---|---|---|---|---|
| ReAct (Reason + Act) | Model alternates reasoning steps with tool calls | Simple · transparent · easy to debug | One agent, no parallelism | Default for most production cases |
| Multi-agent systems | Specialized agents (planner / executor / reviewer) coordinate via scratchpad | More capable on complex goals | Hard to debug · expensive · failure modes multiply | Only when single-agent ReAct is provably insufficient |
| Workflow-bound agents | Deterministic workflow (n8n / Make / LangGraph) calls the LLM at decision points | Reliable · observable · cheap | Less flexible than open-loop agents | 80% of production work we ship |
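To make the workflow-bound pattern concrete, here is a small sketch using the support triage example from earlier in the post. The control flow is plain deterministic Python, and the model is consulted at a single decision point. Everything named here is hypothetical: `classify_ticket` is a keyword stub standing in for the LLM call so the code runs offline, and `KNOWLEDGE_BASE`, the categories, and the 0.7 threshold are illustrative assumptions.

```python
from dataclasses import dataclass

@dataclass
class Classification:
    category: str
    confidence: float

def classify_ticket(text):
    """Stand-in for the LLM decision point; keyword rules keep the sketch offline."""
    lowered = text.lower()
    if "refund" in lowered or "invoice" in lowered:
        return Classification("billing", 0.9)
    if "password" in lowered or "login" in lowered:
        return Classification("account", 0.85)
    return Classification("other", 0.4)

# Hypothetical knowledge base: category -> canned deflection reply.
KNOWLEDGE_BASE = {
    "account": "You can reset your password from the login page.",
}

def triage(text, min_confidence=0.7):
    """Fixed workflow: classify, try deflection, escalate otherwise."""
    result = classify_ticket(text)               # the only model-driven step
    if result.confidence < min_confidence:
        return {"action": "escalate", "category": result.category, "reason": "low confidence"}
    reply = KNOWLEDGE_BASE.get(result.category)
    if reply is None:
        return {"action": "escalate", "category": result.category, "reason": "no article"}
    return {"action": "deflect", "category": result.category, "reply": reply}
```

Because the branches are ordinary code, each path can be unit-tested and logged without involving the model, which is a large part of why this pattern is cheaper to operate than an open-ended loop.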

Agentic AI vs autonomous AI

These terms get used interchangeably but they are not the same. Autonomous implies no human supervision over long horizons. Agentic just implies tool use and a loop.

In practice, every production "agent" we have shipped has a human in the loop somewhere — even if it's only a daily review of what the agent did. Calling something autonomous in 2026 is mostly marketing.

What changed in 2024–2026 that made this work

Three changes between 2024 and 2026 made agentic AI genuinely production-viable instead of demo-grade. First, tool-calling reliability hit a usable bar with Claude 3.5 Sonnet in mid-2024 and held through the Claude 4.x and GPT-4/5 generations — agents stopped hallucinating tool names and parameter shapes. Second, frameworks (LangGraph, CrewAI, OpenAI Assistants, Claude Agents) standardized the loop pattern so engineers stopped re-inventing the controller. Third, per-call costs dropped enough that running an 8-step loop on routine ops work became affordable rather than a research-budget item.

- Models got reliable enough at tool calling. Claude 3.5 Sonnet (mid-2024) was the first model where tool use felt production-grade. Claude 4.x and GPT-4 / GPT-5 generations extended this.

- Frameworks matured. LangGraph, CrewAI, OpenAI Assistants, Claude Agents all standardized the loop pattern.

- Cost dropped enough that running an 8-step loop at scale became affordable for routine ops work.

How to evaluate whether you need agentic AI

You need agentic AI when three conditions all hold: the work is repetitive but with small variations (so a deterministic workflow underfits), wrong actions can be caught and reversed cheaply (so a mistake costs minutes not contracts), and the value per task exceeds the per-run cost (most LLM ops cost $0.01–$0.30 — a low bar to clear for anything sales-adjacent or ops-adjacent). Miss any one condition and you want a deterministic automation, an outsourced human, or no system at all — not an agent.

- Is the work repetitive but with small variations? (Yes → agent makes sense. No → workflow or human.)

- Can a wrong action be caught and reversed cheaply? (Yes → ship it. No → human-in-the-loop.)

- Is the value per task higher than the cost per agent run? (Most LLM ops cost $0.01–$0.30 per task — easy bar to clear for anything sales-adjacent.)

If you answered yes to all three, build the agent. If not, you probably want a deterministic automation, not an agent. We cover the broader pattern landscape in our guide on what AI automation actually is and the 5 patterns that run in production — agent workflows are one of those five.
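The three questions can also be written down as a small decision function. This is only a sketch of the checklist above under a few assumptions: `TaskProfile` and `recommend` are names invented for the example, the returned strings paraphrase the answers in the list, and the dollar figures in the usage note are illustrative inputs rather than measured data.

```python
from dataclasses import dataclass

@dataclass
class TaskProfile:
    repetitive_with_variation: bool   # question 1
    cheaply_reversible: bool          # question 2
    value_per_task_usd: float         # question 3, left side
    cost_per_run_usd: float           # question 3, right side

def recommend(profile):
    """Apply the three checks in order and return a plain-language recommendation."""
    if not profile.repetitive_with_variation:
        return "workflow or human"
    if profile.value_per_task_usd <= profile.cost_per_run_usd:
        return "deterministic automation"
    if not profile.cheaply_reversible:
        return "agent with human-in-the-loop"
    return "agent"
```

For example, a task worth $5.00 that costs $0.30 per run, varies slightly each time, and can be undone cheaply comes back as `"agent"`. The same task with an irreversible action comes back as `"agent with human-in-the-loop"`.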