A SaaS client came in last quarter wanting "a programmatic SEO program — three thousand comparison pages, live next month". They had spec'd the templates, picked the keyword pattern, and lined up an AI writing pipeline to generate the body content. The bill for the writing alone was tracking toward $4,800 in API spend. We audited the plan and shipped a different version: 240 pages, real per-row data sourced from public APIs and a manually curated spreadsheet, AI used only for enrichment and rewriting on edit, rolling refresh every 90 days. Six months later that 240-page footprint is doing 38,000 organic sessions a month. The original three-thousand-page plan would almost certainly have been deindexed by Google's helpful-content classifier inside a quarter.

That is the actual shape of programmatic SEO in 2026. The leverage is real, the patterns that work are well documented, and the failure mode is almost always the same: shipping a template before the database underneath it is real. This post is the concept piece — what pSEO is, what it is not, the four pieces that have to be in place, the page patterns that still rank, and the failure modes that turn the program into thin content the moment Google notices. The how-to lives next door in our how to build an AI content engine guide, which covers the broader content-operations stack this sits inside.

What programmatic SEO actually is

Strip the marketing framing and pSEO is a templated content system: one HTML/CSS/JS template, one structured data source, and a build step that joins them into many pages. Each page lives at its own URL, targets one long-tail query, and is hydrated with row-specific data so it actually answers the query. The output is a directory, a comparison index, a city-page program, a template-gallery — anything where the same shape of page repeats across many specific data points.

The core mental model: programmatic SEO is database publishing, not writing. The pages are good because the data behind them is good, not because a model wrote pleasant prose to fill them. Operators who confuse the two ship templates over thin or fabricated data and get punished for it. Operators who get the database right ship fewer pages and rank.

| Approach | What it is | Where it fits |

|---|---|---|

| Programmatic SEO | Template + structured database → many pages, each with real per-row data | Long-tail keyword patterns with predictable structure and real underlying data |

| Mass AI generation | Prompt-driven generation of many free-form articles with no underlying database | Almost nothing in 2026 — Google's helpful-content systems cull this on sight |

| Content velocity | Hand-written or AI-assisted blog posts shipped at a faster cadence | Editorial topics and thought leadership where each piece is meaningfully unique |

| Headless commerce / catalog SEO | Product pages generated from a product database | Adjacent — same plumbing, different intent (commercial vs informational) |

| Local landing pages at scale | A subset of pSEO using "[service] in [city]" patterns and per-city data | Multi-location service businesses, where the per-city data is genuinely different |

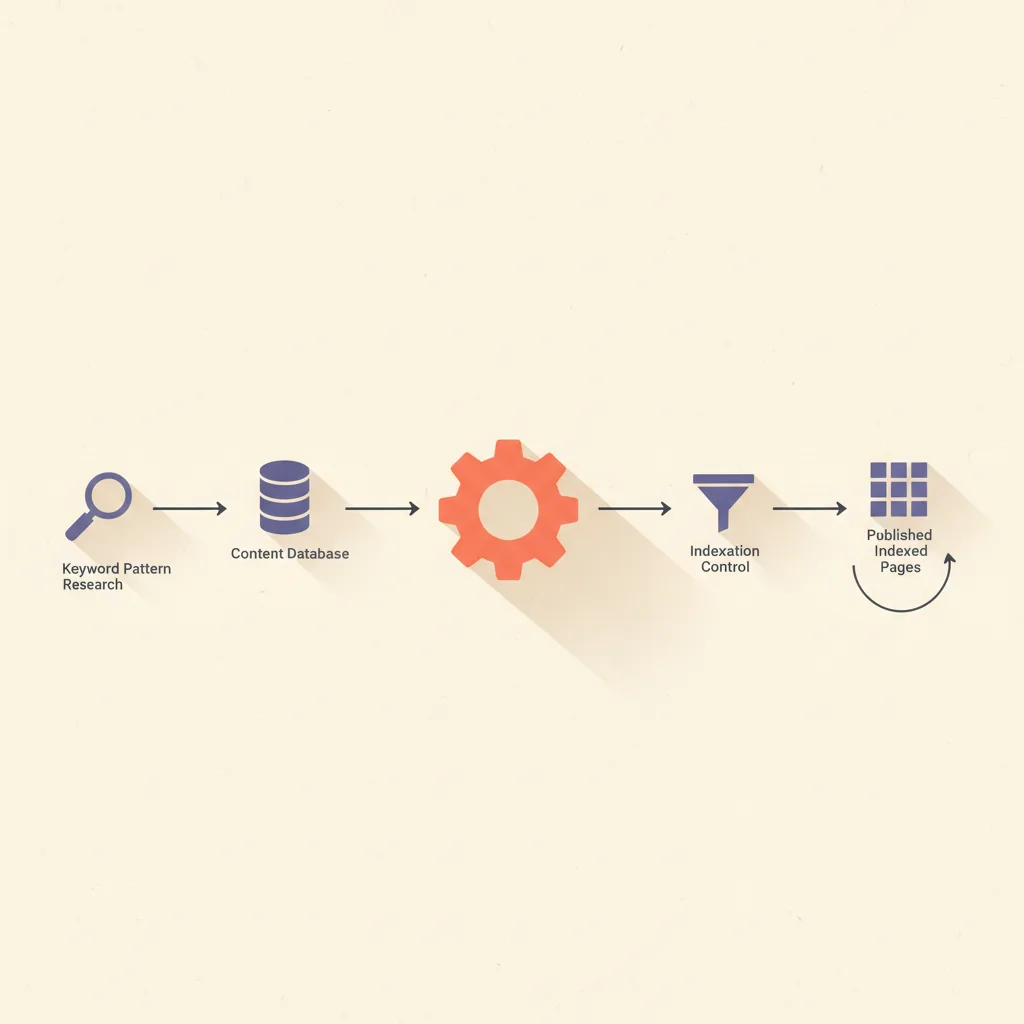

The four pieces that have to be in place

Every pSEO program that holds up in 2026 has the same four moving parts. Skipping any one of them is the single most common reason these programs fail at the 60-to-90-day mark when Google's rolling quality systems get around to evaluating them.

- A keyword pattern with proven long-tail demand. Patterns like "[product] for [use case]", "best [tool] for [audience]", "[city] + [service]", "[X] vs [Y] comparison". The pattern needs hundreds or thousands of plausible variants, and at least a meaningful subset of those variants needs measurable monthly search volume. If only the head term has volume and the tail is hollow, pSEO is the wrong tool.

- A content database with real, page-specific data. For an integration-pages program, that means actual integration documentation per app pair. For a comparison-pages program, real feature/pricing data per tool. For a city-pages program, real local data — addresses, ZIP codes, service areas, photos. The database is the asset; the template is plumbing.

- A template that turns each row into a page that genuinely answers the query. Above-the-fold content has to be the specific answer, not a generic template paragraph with the row's name swapped in. Schema markup matches the page type. Internal links route to closely-related rows so the cluster reads as a network, not a flat list.

- Indexation control and an internal linking model. Not every generated page deserves indexation. The thin-tail rows (low data confidence, no demand signal) should be noindex. The strong-tail rows should be in the sitemap, linked from a hub page, and surfaced in search-engine-friendly navigation. Without this, the long tail eats the head's crawl budget and dilutes site quality signals.

The order matters. Most failed programs invert it: ship the template first, plan the database second, and never get around to indexation strategy. The order that works is database → template → keyword validation → indexation. The template is the last thing to lock down, not the first.

Page patterns that still rank in 2026

Not every pattern works. The ones that hold up consistently share two qualities: predictable user intent at the long-tail level, and genuinely different per-row data. The patterns below are the ones we ship for clients, ranked by how often the underlying database is actually defensible vs how often it ends up being thin.

| Pattern | Example query | Data requirement | Difficulty |

|---|---|---|---|

| Integration pages | "slack zapier integration" | Real integration documentation per app pair | Medium — needs API docs or product spec per row |

| Comparison pages | "airtable vs notion" | Genuine feature, pricing, and use-case data per tool | Medium — needs maintained competitor data |

| Local landing pages | "plumber in austin tx" | Real local presence — addresses, service areas, photos | Hard — most "local pSEO" is fabricated and gets caught |

| Template / asset galleries | "airbnb listing template excel" | Real downloadable templates per row | Medium — needs actual asset production per row |

| Best-of / curation pages | "best ai tools for sdrs" | Curated list with genuine evaluation per item | Hard — needs editorial judgment, not just a database |

| Glossary / definition pages | "what is [term]" | Domain expertise per term, not just a Wikipedia rewrite | Easy on volume, hard on actually-useful versions |

| Calculator / tool pages | "mortgage calculator" + variants | Functional calculator per row, not just a description | Hard — engineering, not content |

| Things-to-do / city directory | "things to do in [city]" | Real local data, photos, opening hours, reviews | Hard — TripAdvisor and Yelp own this; new entrants need a wedge |

The pattern that has degraded the most since 2023: free-form blog-style "best of" pages with no underlying evaluation, just AI-rephrased product marketing. Google has gotten very good at spotting these. The version that still works is the same shape with real evaluation behind each pick — hands-on testing notes, screenshots, pricing pulled from primary sources, dated last-checked timestamps. The difference is editorial discipline, not template design.

How AI fits in the 2026 stack (and where it doesn't)

AI changes pSEO in a narrower way than the marketing copy suggests. The wrong use is what most teams reach for first: prompt the model to write three thousand articles, ship them, hope. That has the worst ROI of any content tactic in 2026 and we audit teams every month who burned $5k–$30k learning it the hard way. The right use is everywhere else in the pipeline.

- Data enrichment, not data invention. Use the model to summarize a long source document into a structured row, classify a tool by category, normalize pricing tiers across vendor pages — anything where a real source exists and the model is compressing it. Do not use the model to invent specifications, prices, or features. The asymmetry: the cost of a fabricated price is permanent reputational damage and the cost of a missed enrichment opportunity is one row of slightly worse data.

- Per-row content blocks that depend on the data. Once the row has real fields, AI is reasonable for generating the prose around those fields — a one-paragraph explainer for an integration page that draws on the integration's real capabilities, an FAQ block grounded in the row's real spec sheet. The constraint is that every claim has to trace back to a structured field, not to the model's training data.

- Edit-time rewriting and refresh. When a row's data updates (a competitor changes pricing, an integration adds a new feature), the AI rewrites the affected sections rather than the whole page. Rolling refresh on a 60-to-90-day cadence keeps the program from going stale.

- Internal-linking suggestions. Embedding-based similarity over the row database surfaces "rows that should link to this row" in a way hand-curated linking cannot at scale. This is one of the highest-leverage uses of AI in pSEO and almost nobody implements it.

- Search-intent validation against the SERP. Before generating a page, fetch the live SERP for the target query and have the model check whether the planned page shape matches what is currently ranking. If the SERP is dominated by transactional pages and we planned an informational one, kill the row before generating it. What is an AI agent covers the underlying agent architecture this validation loop sits on.

The summary that fits on an index card: AI is a data pipeline in modern pSEO, not a writing engine. The agent normalizes, enriches, validates intent, and refreshes — it does not invent the row.

The shape above is consistent across the pSEO programs we audit. The 240-page deep-data version generates roughly 38,000 organic sessions a month at scale; the 3,000-page AI-only version generates roughly 9,000, and that traffic is fragile to the next quality update. The bottleneck is not page count. The bottleneck is how much real data the team can actually maintain, and the right page count is the largest one where every row clears the data bar.

The economics flip the moment you measure cost per delivered session instead of cost per page. AI-only mass generation is the cheapest input cost and the most expensive output cost; the $4,800 API bill in our opener was tracking toward roughly $92 per thousand sessions, against $3.80 for the 240-page version we shipped instead. Operators who instinctively reach for "more pages, cheaper per page" are optimizing the wrong unit.

A practical build, end to end

What an operator-grade pSEO build looks like in our shop in late April 2026. The stack is opinionated; substitutions are fine but the shape stays the same.

- Keyword + pattern validation. Pull the candidate pattern through DataForSEO or Ahrefs to confirm tail volume. Manually inspect the SERP for ten randomly-selected variants in the pattern to confirm the page shape that ranks (informational vs commercial vs hybrid). If fewer than seven of the ten variants have a clear matching page shape, kill the pattern.

- Database design. Pick a structured store — Sanity, Supabase, or Airtable, in roughly that order of preference for content-heavy programs. Define the schema before the template. Every field that appears on the page exists as a column; nothing on the page is hard-coded into the template if it varies per row.

- Data sourcing. For each row, document where the row's data comes from — a public API, a manually curated entry, a vendor product page parsed at a specific date. Rows without a documented source get a "data confidence" flag and are excluded from the indexed sitemap until they have one.

- Template build. Astro or Next.js with static generation, one page component bound to the row schema. Schema.org markup matches the page type (Product, FAQPage, ItemList — whichever fits). The template is reviewed against three real rows before going live, not three fabricated ones.

- AI enrichment layer. A pipeline (n8n, a Cloudflare Worker, or a Python job) reads each row, calls Claude or another model with a tightly scoped prompt that has access only to the row's real fields and any external sources you have explicitly authorized, and writes the generated prose blocks back to the database. Outputs are reviewed before publish on the first build; on rolling refreshes, only diff edits are reviewed.

- Indexation strategy. Sitemap includes only rows above the data-confidence threshold. Hub page links to all indexed rows. Robots policy noindexes the long tail until those rows graduate. Internal-link suggestions populated from embedding similarity across the row database.

- Rolling refresh. Every 60–90 days, the enrichment layer re-checks each row's sources, flags rows where the source data has materially changed, and triggers a rewrite of the affected sections. The agent does not republish unchanged rows.

Our Content Marketing Operations offering ships this exact stack as the production-ready version, and the workflow plumbing under it gets its own deeper coverage in what is n8n. For the question of n8n vs Zapier specifically as the orchestration layer for the enrichment pipeline, n8n vs Zapier has the comparison.

Common failure modes

Patterns we see repeatedly when auditing pSEO programs that broke at the 60-to-180-day mark:

- Template before database. The team ships a beautiful template with real data on three demo rows, then realizes the remaining 2,997 rows do not have the data the template needs. They fill the gap with model-generated prose. Google notices within a quarter.

- Keyword validation skipped. The pattern was assumed to have tail demand based on the head term's volume. Most of the tail variants have effectively zero monthly searches, the team ships pages nobody is looking for, and the program looks like spam to ranking systems even though intent was good.

- No indexation strategy. Every generated page is in the sitemap, including the ones with thin or missing data. Google's crawl budget is spent on the worst pages first; the strong rows take longer to index than they should and the program's site-quality signal averages down to the worst rows.

- Rolling refresh skipped. The program ships and is never updated. Pricing rows go stale at 60 days, feature comparisons go stale at 90 days, and by 180 days half the database is wrong. Updates that have been refreshed by the model count as "fresh content" and the program decays toward zero.

- Treating AI prose as the database. The model generates feature lists, pricing tables, integration capabilities for each row directly, with no underlying source. Some are right by accident; the rest are fabricated. One competitor screenshot of a wrong claim circulates on a forum and the program loses Google's trust.

- No rotting-page review. Pages drop out of search results as user intent shifts (a query becomes more transactional, the SERP changes shape) and the program does not detect it. Stale rows quietly compound until somebody runs an audit and finds half the program below the data confidence threshold.

Where this is heading

Three shifts worth tracking on this surface specifically over the next 12 months:

- AI-only mass generation continues to compress. Google's helpful-content classifier gets better, the bar for "real underlying data" gets higher, and the cohort of programs that ship 5,000+ thin pages ages out of the index. The teams two quarters ahead are already shrinking footprints rather than growing them.

- Schema and structured data become non-optional. Pages that ship without schema markup matched to the page type underperform pages that do, regardless of content quality. The pSEO programs that hold up in 2026 are the ones whose templates emit Product, FAQPage, ItemList, LocalBusiness, or HowTo schema correctly per row.

- AEO (answer-engine optimization) becomes co-equal with SEO. ChatGPT, Claude, Perplexity, and the AI Overviews that now dominate large parts of the SERP all cite pages that are crawlable, well-structured, and answer the query above the fold. The pSEO programs that win in late 2026 are the ones whose pages get cited by answer engines as well as ranked by Google.

The teams two quarters ahead of the conversation in late 2026 are not the ones with the largest page counts. They are the ones whose 200-to-500-page programs are deeply data-backed, schema-marked, and refreshed on a measured cadence — earning citations from answer engines and rankings from Google as a single combined motion. We build that pattern as part of our Content Marketing Operations offering and our AI Stack Audit. The full content engine this sits inside — research, generation, distribution, analytics — is mapped end to end in our how to build an AI content engine guide.