AI UGC and real UGC get pitched as substitutes. They are not. They sit at different layers of the same creative pipeline, and the accounts that treat them as competing options burn budget on whichever side they picked. We audit ad accounts every month — the ones holding CAC flat through 2026 are running both, on purpose, with each layer doing the job the other cannot.

The framing in most comparison content is rigged. Creator-side and agency content argues AI UGC is fake and tanks performance; vendor-side content argues AI UGC matches real UGC for a tenth of the cost. Both ignore the actual operator question, which is not which one converts better in isolation but which one belongs at which layer of a real creative engine. The numbers below come from active client billing in April 2026 across DTC, app, and B2B-services accounts running 4–60 ad variants a week.

Why the substitute framing produces the wrong answer

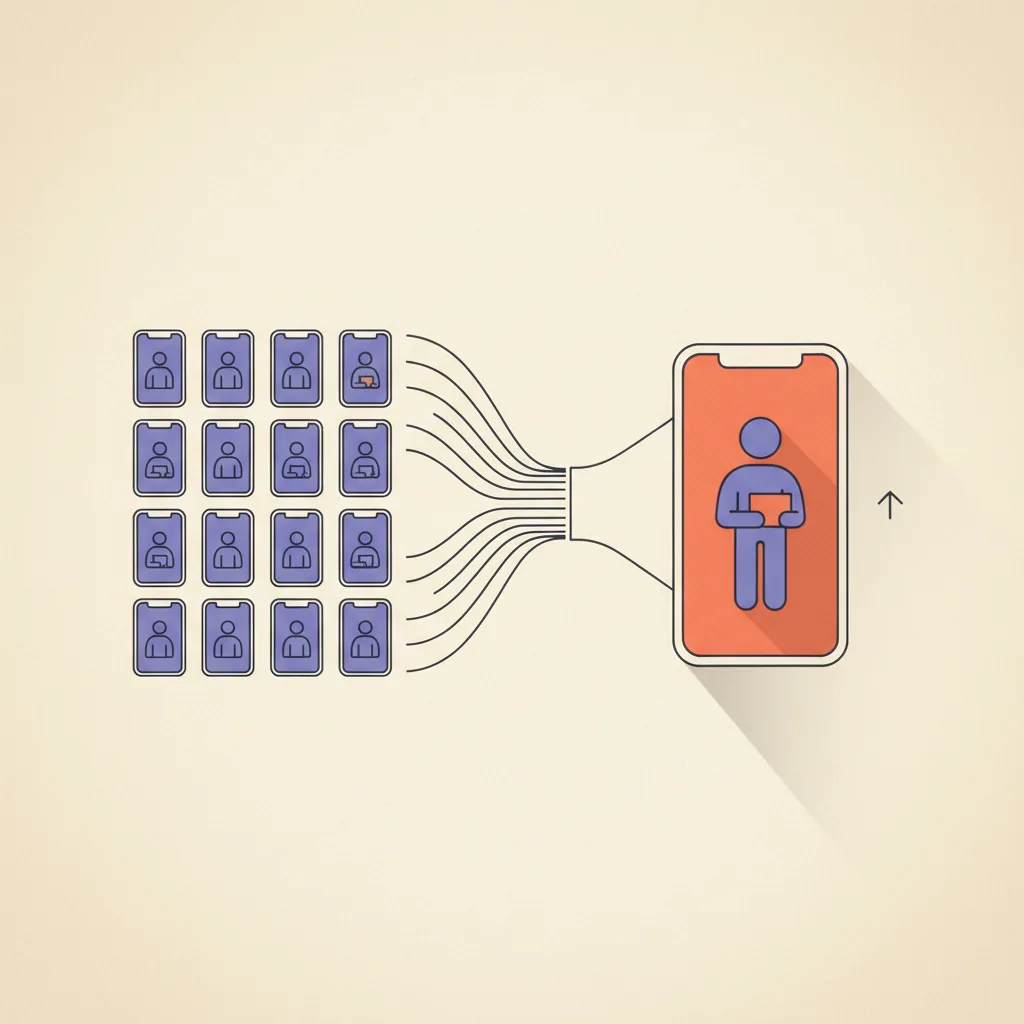

A B2C ad account doing serious creative testing has at least three distinct jobs that look like UGC from the outside. The jobs do not have the same cost, speed, or trust profile, and the same content layer does not solve all three.

- Job 1 — Angle discovery. Generate 20–50 hook reads against the same product to find which angle the algorithm actually likes. Volume is the only thing that matters. Per-variant quality barely registers.

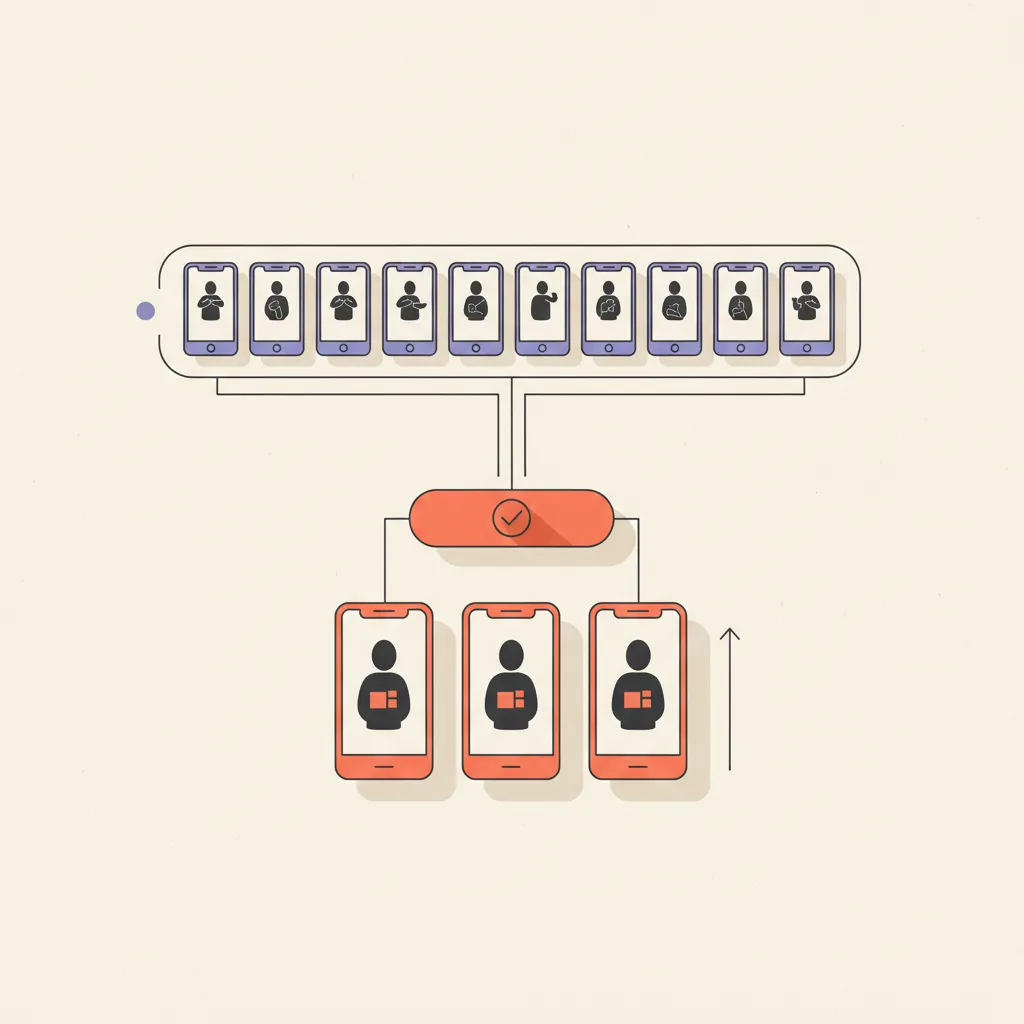

- Job 2 — Winner scaling. Take the 1–2 angles that beat the control and produce 3–6 polished versions of each that can run for 8–12 weeks without fatiguing. Per-variant authenticity matters; volume does not.

- Job 3 — Hero brand spots. The launch ad, the founder spot, the testimonial that anchors the brand. One real person, one real story, one creative that runs everywhere. Trust is the entire conversion lever.

AI UGC is structurally good at job 1 and bad at jobs 2 and 3. Real UGC is structurally good at jobs 2 and 3 and bad at job 1. Substituting one for the other in the wrong layer is what produces the failure modes you see in both camps — AI accounts whose CTR collapses in week four, real-UGC accounts that never find the angle in the first place because they could only afford to test three.

The cost spread is real, but per-video cost is the wrong unit

Headline numbers first, because the spread is genuinely 100x or more at the per-finished-video unit: roughly $3 for an AI clip against $300–$1,500 for a real one. The chart below covers direct production cost only; script time, brief writing, and ad-account ops are roughly equal across both columns and are excluded.

What the chart hides: the $3 AI UGC clip is a useful number only if you generate 30 of them and one finds an angle that beats the control. If you generate one and ship it, the per-finished-video price is meaningless because that one clip does no work. Conversely, the $1,200 real UGC clip is only worth it if you already know the angle. Otherwise you are buying a $1,200 lottery ticket against an angle hypothesis that may not survive the first week of testing.

The unit that decides the budget is cost per winning angle. Run the math two ways:

- Real UGC alone — 5 creators briefed at $400 each = $2,000. One angle wins, four lose. Cost per winning angle: $2,000.

- AI UGC alone — 30 variants at $3 each = $90. Three angles emerge as candidates; one becomes a real winner with sustained scale. Cost per winning angle: $90. But the winner is an AI clip, which means it tops out at week six because it cannot scale into hero rotation.

- Stacked — 30 AI variants at $3 = $90 to find the angle. Then 4 real UGC creators briefed on that angle at $400 each = $1,600. Cost per winning angle that can scale: $1,690. Lower than real-UGC-alone, with a creative that can run for 12 weeks instead of six.

Stacked is not a hedge. It is the lowest cost per winning angle that can actually scale, out of the three options. The whole argument for substituting one for the other rests on a unit (per-finished-video cost) that nobody actually buys media against.
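The three scenarios above can be checked with a few lines of arithmetic. This is a minimal sketch using only the figures quoted in the list; the `Plan` class and its field names are illustrative, and the `scalable` flag is an assumption that encodes the claim that an AI-only winner cannot move into the long-running rotation.

```python
from dataclasses import dataclass


@dataclass
class Plan:
    name: str
    discovery_cost: float  # spend to find the angle
    scaling_cost: float    # spend on real-creator versions of the winning angle
    winning_angles: int    # angles that beat the control
    scalable: bool         # assumption: can the winner run in the 8-12 week rotation?

    @property
    def cost_per_winning_angle(self) -> float:
        return (self.discovery_cost + self.scaling_cost) / self.winning_angles


plans = [
    Plan("real only", 5 * 400, 0, 1, scalable=True),
    Plan("ai only", 30 * 3, 0, 1, scalable=False),
    Plan("stacked", 30 * 3, 4 * 400, 1, scalable=True),
]

for plan in plans:
    tag = "scalable" if plan.scalable else "tops out early"
    print(f"{plan.name}: ${plan.cost_per_winning_angle:,.0f} per winning angle ({tag})")

# Cheapest option among plans whose winner can actually scale.
best = min((p for p in plans if p.scalable), key=lambda p: p.cost_per_winning_angle)
print("lowest cost per scalable winning angle:", best.name)
```

Swapping in your own creator rates and variant counts is the quickest way to see where the stacked option stops being the cheapest scalable route for a given account.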

Where AI UGC actually fails

Three failure modes show up consistently in audited accounts. None of them are about render quality, which is the part vendor decks address. They are about layers vendor decks do not touch.

Legal and platform disclosure

Meta and TikTok both started flagging synthetic media in late 2025. Mandatory AI disclosure tags are rolling out through mid-2026. Pure-AI ads still run, but the categories where the disclosure tag visibly suppresses CTR are exactly the categories where AI UGC was supposed to be cheap leverage — supplements, skincare, fitness apps, finance education. The disclosure label reads as "this person did not actually use this product" to the viewer, which is the opposite of what UGC is supposed to signal.

Product physics that AI does not render

Skincare close-ups where the texture matters. Fabric drape on apparel. Hair products mid-application. Food shots where the steam, sizzle, or melt is the entire ad. Pet behavior. Anything with a baby or a toddler. AI avatars holding a product box are convincing at 9:16 thumb-scroll speed; AI avatars actually using the product in a way the product is supposed to be used are not. The categories where this matters are roughly half of consumer DTC.

Casting authenticity for high-trust verticals

Health, finance, supplements, parenting, religion, anything regulated. The "this is a real person who actually used the product" signal is not decorative. It is part of the conversion lift, part of the legal posture, and part of why the ad clears review. We have audited supplement accounts where switching from real UGC to AI UGC in the hero rotation cut conversion rate by 30–55% on identical media spend. Not because AI UGC is fake — because viewers in this segment specifically refuse to trust an avatar.

Where real UGC actually fails

Real UGC does not fail on quality. It fails on the structural properties that decide whether a creative engine compounds — and those are the properties AI UGC was built to fix.

- Cost. $300–$1,500 per finished video. Briefing 10 creators to find an angle costs $3,000–$15,000 before any media is bought. Most accounts cannot afford to test that way.

- Speed. 5–10 days from brief to first cut on a mid-tier creator, 2–4 weeks for boutique. The angle window in paid social is roughly 2–3 weeks from when an angle starts working to when it fatigues. By the time the second batch of real UGC ships, the angle is already burned.

- Briefing variance. Five creators given the same script produce five interpretations, two of which miss the brief entirely. The variance is part of what makes real UGC feel real, but it also means the angle test is contaminated by execution differences.

- Exclusivity. The creator can post the same product for a competitor 30 days later. Most $300–$500 deals do not buy exclusivity; the ones that do roughly double the price.

- Localization. Every new language is a new shoot. AI UGC re-renders for free; real UGC requires casting and travel.

- Iteration. A revision is a re-shoot. AI UGC iterates by regenerating; real UGC iterates by paying again.

None of these are quality complaints. They are structural reasons real UGC is the wrong layer for angle discovery. Treating it as the volume layer is what produces the "we burned $30k on creators and never found a winning angle" failure mode that lands in our inbox roughly twice a month.

What AI UGC and real UGC are actually good at

Side-by-side capability table — the operator-relevant axes, not the vendor-deck axes.

| Dimension | AI UGC | Real UGC | Winner |
|---|---|---|---|
| Cost per finished video | $0.50–$5 | $300–$1,500 | AI |
| Time to first cut | 5–15 minutes | 5–14 days | AI |
| Variants per week | 30–100 | 2–6 | AI |
| Revisions cost | $0 (regenerate) | $50–$300 + days | AI |
| Localization (per language) | $0 (re-render) | Re-shoot required | AI |
| Multi-product testing | Trivial | Linear-cost | AI |
| Authenticity ceiling | High but capped | Highest (real person) | Real |
| Trust signal in regulated verticals | Suppressed by disclosure | Strong | Real |
| Product-physics realism (texture, drape) | Weak | Strong | Real |
| Sustain past 6-week run | CTR fatigues fast | Holds 8–12 weeks | Real |
| Hero-spot suitability | Low | High | Real |
| Exclusivity / competitor block | N/A | Available (paid) | Real |
| Disclosure-tag risk on Meta / TikTok | Required by mid-2026 | None | Real |

The pattern that holds: AI UGC wins everywhere a creative engine needs leverage, and real UGC wins everywhere the brand needs trust. Layer them by job, not by preference.

The stacked playbook

This is the setup we install on client engagements as part of our AI Creative service. It takes three weeks to reach the first compounding cycle; after that, angle discovery and winner scaling run in parallel.

Week 1 — angle discovery

- Brief generation. Pull the top 5 winning ads from the account history and the top 5 from competitor accounts. Identify 5–8 angle hypotheses (price, time-saving, social-proof, transformation, problem-aware, etc.). Write one 60-word script per angle.

- AI variant pass. Generate 5–6 variants per angle on Arcads or Creatify, varying the avatar, the hook read, and the first-3-second framing. Aim for 30 finished AI clips by end of week. See "What is AI UGC" for the platform mechanics.

- Ship to ad account. All 30 variants into Meta and TikTok with a $20–$40 daily budget per ad set. Let the algorithm sort.
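One way to plan the week-1 batch is to enumerate the angle-by-variant grid before generating anything. The sketch below is illustrative only: the avatar and hook labels are hypothetical placeholders, the ID format is an assumed naming convention, and the $3 per clip figure is the one used earlier in this article. It does not call any Arcads or Creatify API.

```python
from itertools import product

# Angle hypotheses named in the brief-generation step.
angles = ["price", "time-saving", "social-proof", "transformation", "problem-aware"]
avatars = ["avatar_a", "avatar_b", "avatar_c"]  # hypothetical avatar labels
hooks = ["question", "bold-claim"]              # hypothetical hook reads

# One record per clip; the ID keeps the angle recoverable from the ad name.
variants = [
    {"id": f"{angle}__{avatar}__{hook}", "angle": angle, "avatar": avatar, "hook": hook}
    for angle, avatar, hook in product(angles, avatars, hooks)
]

cost_per_clip = 3  # dollars, the per-clip figure used in the cost section
production_cost = len(variants) * cost_per_clip

print(len(variants), "variants,", f"${production_cost} production cost")
```

Putting the angle into the ad name is a design choice that pays off in week 2, when results need to be grouped by hypothesis rather than by individual clip.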

Week 2 — winner identification + real UGC briefing

- Read the data. By day 10 the algorithm typically surfaces 1–3 angles with sustained CTR above the rolling account average. Tag the winners by hypothesis (price angle won, transformation angle lost, etc.).

- Brief real creators on the winning angles. Reach out to 3–5 real UGC creators on Insense, Billo, or direct outreach. The brief includes the AI clip that won as a reference (this is the leverage point — most agencies will not give creators an AI reference, so the brief is fuzzier than it needs to be).

- Continue AI testing on the next 5 angle hypotheses. The angle hunt does not stop. Generate the next 30 AI variants in parallel.
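The "read the data" step can be made mechanical. The sketch below assumes an export of daily per-ad rows and a rolling account-average CTR computed elsewhere; the function name, the row layout, and the "above average on at least 5 of 10 days" rule for "sustained" are all assumptions, not platform definitions. The sample numbers are invented purely to exercise the code.

```python
from collections import defaultdict


def winning_angles(daily_stats, account_avg_ctr, min_days_above=5):
    """Return angles whose daily CTR beat the account average on enough days.

    daily_stats: iterable of (day, angle, impressions, clicks), one row per ad.
    """
    totals = defaultdict(lambda: [0, 0])  # (day, angle) -> [impressions, clicks]
    for day, angle, impressions, clicks in daily_stats:
        totals[(day, angle)][0] += impressions
        totals[(day, angle)][1] += clicks

    days_above = defaultdict(int)
    for (day, angle), (impressions, clicks) in totals.items():
        if impressions and clicks / impressions > account_avg_ctr:
            days_above[angle] += 1

    return sorted(a for a, n in days_above.items() if n >= min_days_above)


# Invented sample: ten days of data for three angles.
sample = []
for day in range(1, 11):
    sample.append((day, "price", 1000, 18))          # 1.8% CTR every day
    sample.append((day, "transformation", 1000, 9))  # 0.9% CTR every day
    sample.append((day, "social-proof", 1000, 14 if day <= 3 else 10))  # fades after day 3

winners = winning_angles(sample, account_avg_ctr=0.012)
print(winners)
```

In this sample only the price angle clears the bar; social-proof starts strong but does not sustain, which is the pattern the threshold is meant to filter out before any creator budget is committed.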

Week 3+ — winner scaling + parallel angle hunt

- Real UGC arrives, scales the winning angle. 3–5 real-creator versions of the winning angle ship into the ad account. These become the workhorse creatives for the next 8–12 weeks. The Facebook Ads management layer routes spend toward whichever real version performs.

- AI UGC keeps testing. The angle-discovery layer never stops. By week 6 you have a portfolio of 4–6 winning angles, each scaled with real UGC, with AI testing the next batch underneath.

- Hero rotation gets real, always. Launch ads, founder spots, the spot you put on the homepage — these are real UGC, full stop. AI does not enter this layer.

The shape is the point. AI alone hits hard, fades hard, and never finds the next angle in time because everyone is staring at the dashboard waiting for a refresh. Real alone holds longer per angle but cannot test enough hypotheses to keep the portfolio fresh. Stacked is the only line that holds because the angle-discovery and angle-scaling jobs are running in parallel, not in sequence.

Decision matrix — pick AI, pick real, or stack

| Situation | Pick |
|---|---|
| Angle discovery / hook testing / variant volume | AI UGC |
| Localization across 5+ languages | AI UGC |
| Concept-test before committing creator budget | AI UGC |
| Multi-product catalog with shared script | AI UGC |
| Below-$20-CPM SMB scaling on TikTok / Meta | AI UGC |
| Hero / launch ads | Real UGC |
| Health, finance, supplements, kids | Real UGC |
| Skincare close-ups, food shots, fabric drape | Real UGC |
| Founder spot / brand testimonial | Real UGC |
| Ads requiring competitor-exclusivity | Real UGC |
| DTC running 30+ ad variants a week | Stack: AI for angle, real for scale |
| Mid-market app with steady creative budget | Stack: AI for angle, real for scale |
| Series-A brand wanting CAC discipline + brand build | Stack: AI for angle, real for scale |
| Anything testing more than 5 angles per quarter | Stack: AI for angle, real for scale |

The default for almost any account doing real volume in 2026 is the stacked row. Single-source UGC strategies survive at the small end (under $25k/month media spend) and at the very high end (six-figure brand spots that all run real). Everyone in the middle — which is most of the market — gets to compounding economics by stacking.

What the performance studies actually say

Vendor-pitched performance numbers in this category should be read with caution. The studies that show AI UGC at "350% higher engagement on TikTok" are usually comparing AI UGC variants against bottom-quartile real UGC, which rigs the comparison. The honest summary across the studies that disclose methodology:

- AI UGC wins on engagement at the top of the funnel. Higher CTR, higher view-through, higher initial saves and shares. This is the angle-discovery signal — viewers respond to novelty and volume of variants, not to AI specifically.

- Real UGC wins on conversion rate further down the funnel. Lower bounce on the landing page, higher add-to-cart rate, higher checkout completion. This is the trust signal that converts the click into revenue.

- Stacked workflows win on both. Top-of-funnel CTR comparable to AI-alone; conversion rate comparable to real-alone. The studies that report stacked numbers (Cometly, Hoox, Superscale across 2025–2026) all converge on this.

The quoted "350% engagement lift" and "AI UGC delivers 6x ROAS" headlines come from pure-AI tests in app install and low-AOV consumables, where the trust gap is small and the volume gap is everything. They are real, but they are not the whole funnel. Anyone running a real e-commerce P&L knows engagement is not the unit; gross profit per customer is. Apply the studies to the layer they came from.

How this fits into a real ad creative engine

Standalone AI UGC is a tactic. Standalone real UGC is a tactic. The full creative engine — what we install on client engagements — has five layers, and the AI vs real question only makes sense inside the variant generation layer.

- Brief generation. An LLM that pulls winning ads from your account history and competitors and proposes 5–8 new angle hypotheses per week.

- Variant generation (AI layer). Arcads, Creatify, MakeUGC, HeyGen. 30+ AI clips per week against the angle hypotheses. See "Best AI video ad tools" for the platform shortlist.

- Variant generation (real layer). 3–5 real creators per month briefed on whichever angles won the AI test. This is the workhorse layer.

- Brand-lock library. Approved scripts, voice tones, product references, and disclosure language. Every variant — AI or real — inherits from this.

- Performance feedback. Weekly readout that feeds the next brief generation pass. The system gets smarter the longer it runs.

Without the brief layer above and the feedback layer below, both AI and real UGC just produce clips. With them, you get a creative engine that compounds — and inside that engine, the AI vs real question becomes an internal layer assignment, not a vendor selection. We run a setup like this for clients as part of our AI Creative service and AI Stack Audit.

Where this is heading through 2026

Three patterns to watch through the rest of the year:

- AI disclosure tags become mandatory and visible. Meta and TikTok finalize synthetic-media labels by Q3 2026. The accounts that survive are the ones already running stacked, where the disclosure tag is on the angle-discovery layer (AI) and the scaling layer (real) carries the trust signal.

- Custom-avatar pricing keeps falling. Training a custom AI avatar of one of your real creators costs $20–$50 in 2026. The line between AI UGC and real UGC blurs at the edge: you film the creator once, then re-render their likeness for new scripts. This is the hybrid pattern that lets one $400 shoot service 30 angle tests.

- The boutique real-UGC tier gains pricing power. Mid-tier creators ($300–$500) get squeezed by AI; boutique creators ($800–$1,500) with strong follower bases and exclusivity terms get more expensive, not less. The middle of the market hollows out.
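The custom-avatar pattern in the second bullet above comes down to simple per-test arithmetic. This is a rough sketch, assuming each re-render costs the $0.50–$5 per-clip range from the capability table; the article does not quote a re-render price for custom avatars, so treat that input as a placeholder.

```python
shoot_cost = 400            # one real-creator shoot, from the text
avatar_training = (20, 50)  # custom-avatar training range, from the text
render_cost = (0.50, 5)     # assumed: per-clip AI price from the capability table
tests = 30                  # angle tests serviced by the one shoot

# Spread the fixed costs plus the per-clip renders across all 30 tests.
low = round((shoot_cost + avatar_training[0] + tests * render_cost[0]) / tests, 2)
high = round((shoot_cost + avatar_training[1] + tests * render_cost[1]) / tests, 2)

print(f"hybrid cost per angle test: ${low:.2f} to ${high:.2f}")
```

Under those assumptions each test lands well below the $400 it would cost to brief a real creator per angle, while still carrying a real person's likeness.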

The substitute framing — AI replaces real, or real beats AI — is the conversation everyone is having on Reddit and LinkedIn. The work that compounds is at a different layer: stack them, assign the right job to each, and stop arguing about which one converts better in isolation. Two quarters ahead, this is what the accounts holding CAC flat are doing while the rest of the market keeps picking sides.