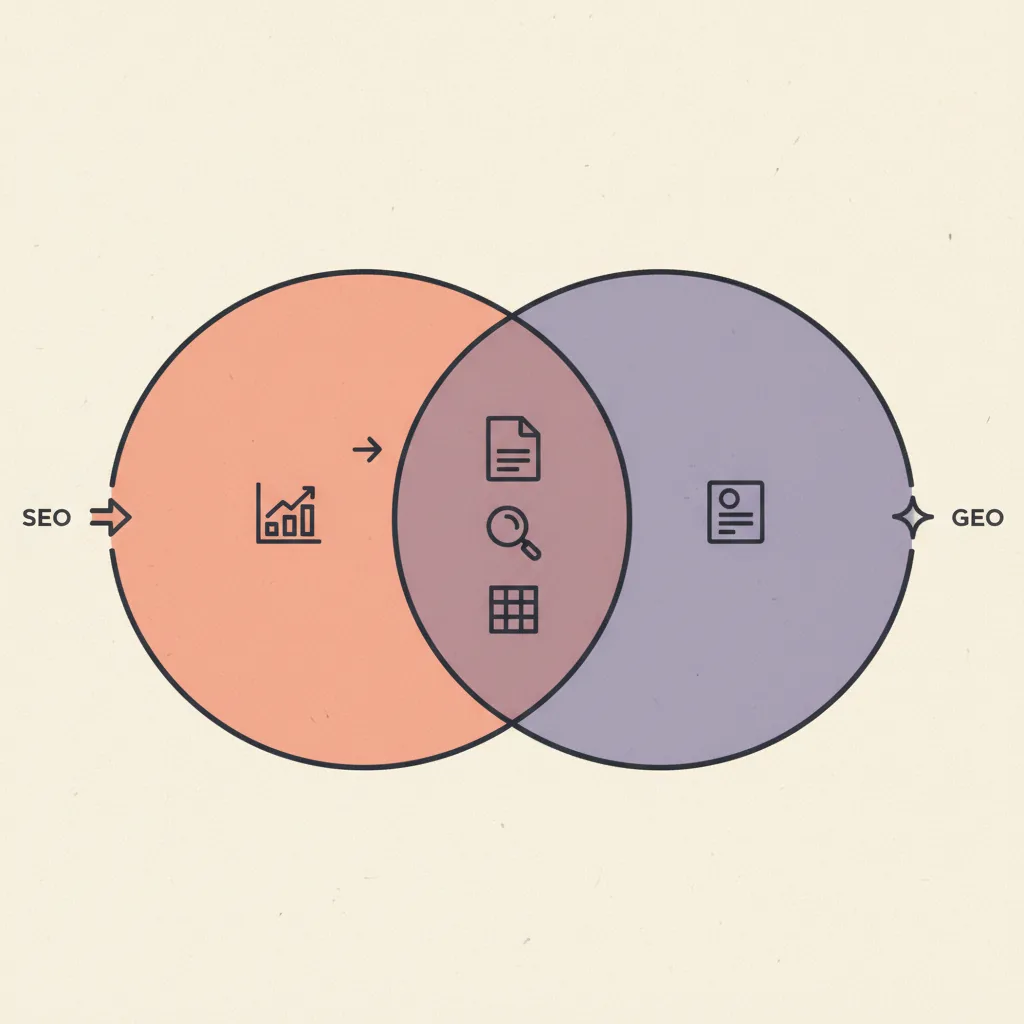

Every guide that covers GEO starts with the same frame: "SEO is dead, GEO is the new SEO." That frame is wrong on both ends. Google processes 13.7 billion searches per day. ChatGPT processes roughly 1 billion. SEO is not dead; it is the load-bearing structure that GEO rests on. GEO is not "new SEO." It is a distinct optimization surface with a different signal set, a longer feedback cycle, and a different definition of success. Getting cited in a Perplexity answer is not the same job as ranking in position 3 on Google. It requires different structural choices at the content level, and conflating the two produces content that does half the job for each platform.

This post covers GEO as a specific discipline: what it is, how the extraction mechanics work, what signals drive inclusion in AI-generated answers, and the content architecture decisions that make a knowledge post GEO-ready without requiring a separate writing workflow. We build and audit these systems for clients across our AEO/GEO cluster — the signal patterns below come from that work, cross-checked against published research from GEO Alliance and AuraMetrics (February–April 2026).

What is Generative Engine Optimization exactly?

Generative Engine Optimization (GEO) is the practice of structuring content, entity signals, and source authority so that AI systems with retrieval-augmented generation select your content as a cited source when synthesizing answers to user queries. The "generative" modifier refers to the synthesis step: unlike a traditional search engine that returns a list of links, a generative engine produces an original answer composed from multiple retrieved sources with attribution. GEO is the work of being one of those attributed sources. It differs from AEO (Answer Engine Optimization) in scope: AEO focuses narrowly on the extraction step — getting pulled into the answer. GEO includes the broader ecosystem of signals that determine whether an AI system considers your content a candidate at all: topical authority depth, entity grounding in the broader knowledge graph, and presence on the third-party platforms that AI training corpora draw from — Reddit, HN, Substack, LinkedIn, G2, and Capterra. AEO and GEO overlap heavily in tactics but GEO has a longer optimization cycle and a higher citation ceiling.

| GEO signal | What it affects | Time to result |
|---|---|---|
| Content freshness (<30 days) | 3.2x citation multiplier; fastest lever | 1–2 weeks after update |
| PAA-aligned FAQ schema | Extraction confidence from AI engines | 1–4 weeks |
| Citable passage length (134–167 words) | Extraction quality; AI prefers self-contained blocks | 1–4 weeks |
| Topical authority depth (5+ H2s) | LLM treats content as authoritative reference, not stub | 4–8 weeks |
| Third-party brand mentions | Entity grounding; feeds LLM training and retrieval corpus | 3–6 months |
| Review site listings (G2, Capterra, etc.) | Listicle feeds; 3x stronger AI citation signal than backlinks | 3–6 months |

How do generative engines decide what to cite?

Generative engines retrieve candidate content through a combination of traditional search index signals and retrieval-augmented generation (RAG) pipelines. The content that enters the candidate pool is already filtered by some version of traditional authority, which is why GEO and SEO share the same content foundation. But the selection step from candidate pool to actual citation uses a different scoring model: relevance to the query entity, structural accessibility of the answer, freshness of the source, and internal coherence of the passage. GEO Alliance's April 2026 study found that ChatGPT's citations overlap with Google's top-10 results only 14% of the time on the same queries, while Perplexity's overlap with Google's top-10 at 91%. This divergence means optimizing for Google AI Overviews (which weight traditional authority heavily) and optimizing for ChatGPT (which weights entity relevance and structured content more heavily) are partially different jobs. The GEO content architecture targets the signals these engines share: structural depth, freshness, entity grounding, and self-contained citable passages.

The HN community thread on GEO from early 2026 surfaced a useful observation from an operator running 800 consumer queries: "AI answers shift a lot. In classic search a page-1 spot can linger for weeks; in our runs, the AI result set often changed overnight." The practical implication is that GEO content needs freshness as a recurring maintenance item, not a one-time setup. Pages that are not touched within 60–90 days lose citation pickup to more recently updated equivalents, even when the content itself is accurate. This is distinct from traditional SEO, where a well-ranked page can hold position for months without updates. Generative engines re-evaluate candidate sources frequently, and recency is one of the scoring signals. The cost of maintaining freshness is low — one substantive update per post per quarter is sufficient. The cost of ignoring it is losing citations to competitors who are doing the maintenance.
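The quarterly freshness check described above can be sketched in a few lines. This is a minimal illustration, not a production audit: the URL paths and dates are hypothetical, and the 90-day default simply takes the upper end of the 60–90 day window mentioned in the text.

```python
from datetime import date

def stale_pages(last_updated, today, max_age_days=90):
    """Return URLs whose last substantive update is older than max_age_days."""
    return sorted(
        url
        for url, updated in last_updated.items()
        if (today - updated).days > max_age_days
    )

# Hypothetical posts and last-updated dates, for illustration only.
pages = {
    "/blog/what-is-geo": date(2026, 1, 5),
    "/blog/what-is-aeo": date(2026, 4, 20),
}

print(stale_pages(pages, today=date(2026, 5, 1)))
```

Passing `today` explicitly keeps the check reproducible; in a scheduled job it would typically be `date.today()`.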

What does GEO-optimized content look like structurally?

GEO-optimized content is defined by five structural decisions made at the outline stage — decisions that serve both human readers and AI extraction engines from the same draft. These decisions are not about adding length; they are about adding structure at the right level. A 2,000-word post that makes all five decisions will outperform a 4,000-word post that makes none of them, on every AI search platform that evaluates content for citation pickup. The decisions below are ordered by impact. If you can only implement two, start with topical depth and citable cluster construction — they carry the most weight in extraction scoring.

Decision 1 — Topical depth over breadth

A 1,200-word post covering ten aspects of a topic at 120 words each produces ten thin passages that no AI extractor can quote without paraphrasing. A 2,000-word post covering five aspects at 350 words each, each section opening with a direct answer followed by evidence, produces five citable blocks. GEO rewards depth on fewer questions over breadth on many. The minimum for a knowledge post that targets AI citation: five H2 sections, each with an opener paragraph of 120–160 words that fully answers the heading question. The breadth trap is seductive because covering more questions feels more comprehensive. But AI extractors are not scoring comprehensiveness — they are scoring whether each individual passage can stand alone as a direct answer. A post that covers three questions thoroughly produces more citation opportunities than a post that mentions twelve questions superficially.

Decision 2 — H2 headings as direct questions or claims

AI extraction engines score H2 headings as query anchors. A heading phrased as a question ("How do generative engines decide what to cite?") signals to the extractor that the following passage is a direct answer to that question — the exact format AI Overviews and Perplexity are looking for. A heading phrased as a label ("The technical architecture") does not carry that signal. More than 50% of H2s should be questions or direct claims for GEO-optimized posts. The practical audit: paste your H2 list and count how many could function as a search query a real user would type. If fewer than half can, convert the label headings to question form. "What does GEO-optimized content look like structurally?" outperforms "GEO content structure" on AI extraction scoring even when the paragraph content is identical underneath.
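The heading audit can be approximated with a small script. The sketch below assumes a deliberately crude heuristic: a heading counts only if it ends with a question mark. Direct claims still need a human read, so treat the result as a lower bound rather than a score.

```python
def is_question(heading):
    """Crude heuristic: a heading counts as a question if it ends with '?'."""
    return heading.strip().endswith("?")

def question_share(headings):
    """Fraction of headings phrased as questions (0.0 for an empty list)."""
    if not headings:
        return 0.0
    return sum(is_question(h) for h in headings) / len(headings)

# Two question headings and two label headings taken from the examples above.
headings = [
    "How do generative engines decide what to cite?",
    "The technical architecture",
    "What does GEO-optimized content look like structurally?",
    "GEO content structure",
]

print(f"{question_share(headings):.0%} of H2s are questions")
```

A share at or below one half would suggest converting some of the label headings to question form.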

Decision 3 — Citable cluster construction

The citable cluster is the atomic unit of GEO. It is a single H2 section where the opener paragraph (120–160 words) fully answers the heading question, followed by supporting evidence in bullet lists or a table. The opener is what gets extracted and cited. The supporting evidence is what validates the opener for the human reader. When reviewing a post for GEO readiness, count the number of citable clusters — contiguous runs of paragraphs between headings that land in the 134–167 word range. More clusters in that range means higher citation pickup probability. The fastest way to fail the cluster test: write a one-sentence H2 opener, then immediately drop into a bullet list. The bullet list breaks the cluster and the opener paragraph is 15 words — not citable. The fix is adding a full 120–160 word direct-answer paragraph before the bullet list, treating the list as supporting detail rather than the answer itself.
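The cluster test lends itself to a quick word-count check. The sketch below assumes each section's body is available as plain text with paragraphs separated by blank lines. The 120–160 default follows the opener range given in this section; pass `low=134, high=167` to test against the passage-length range instead.

```python
def opener_word_count(section_text):
    """Word count of the first non-empty paragraph in a section."""
    paragraphs = [p for p in section_text.split("\n\n") if p.strip()]
    return len(paragraphs[0].split()) if paragraphs else 0

def is_citable_opener(section_text, low=120, high=160):
    """True if the section's opener paragraph falls inside the target range."""
    return low <= opener_word_count(section_text) <= high

# A one-sentence opener followed by a bullet list: the failure case described above.
thin_section = "GEO has five levers.\n\n- freshness\n- schema"

# A placeholder 140-word opener followed by supporting bullets.
full_section = " ".join(["word"] * 140) + "\n\n- supporting evidence"

print(is_citable_opener(thin_section), is_citable_opener(full_section))
```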

Decision 4 — Named entity grounding

AI extraction models score content higher when the claims are grounded in named, verifiable entities: specific companies, specific tools, specific studies with dates and sources. "AI tools are growing fast" is not extractable as a citation. "ChatGPT crossed 1 billion daily queries in 2026 while Google still processes 13.7 billion — the gap is narrowing but Google is not dead yet" is extractable and attributable. Every factual claim in a GEO-optimized post should have at least one named entity anchor: a company name, a tool name, a dated source, or a specific number. Entity grounding also helps AI systems decide how to attribute the citation. A passage that references GEO Alliance (April 2026) is easier to attribute than one that says "recent research shows." The attribution chain from passage to source to brand is part of what makes the citation appear in AI search results with your brand name attached.

Decision 5 — FAQ as PAA mirror

The FAQ section is the highest-concentration source of citable passages in a knowledge post. Four to six questions aligned verbatim to the People Also Ask questions for the target keyword, each answered in 2–4 specific sentences, produces four to six pre-validated extraction candidates. PAA wording is what users actually search; AI systems are optimized to answer those exact phrasings. Using PAA wording verbatim in the FAQ is the fastest single move to improve citation pickup on an existing post. The FAQ answers are also the passages AI systems most often pull for voice search and quick-answer panels, where the extraction window is tighter. A 60-word FAQ answer that directly responds to the PAA question text is more extractable than a 200-word discursive response to the same question — FAQs are one of the few places where shorter is better for citation density.
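One way to pair the FAQ with schema markup is to generate schema.org `FAQPage` JSON-LD from the same question and answer pairs. The sketch below uses the standard `FAQPage`, `Question`, and `Answer` types; the sample question is a placeholder, and real entries should use the PAA wording observed for your own target keyword.

```python
import json

def faq_jsonld(pairs):
    """Build a schema.org FAQPage document from (question, answer) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }

# Placeholder pair; substitute the verbatim PAA questions for your keyword.
pairs = [
    (
        "What is Generative Engine Optimization?",
        "GEO is the practice of structuring content, entity signals, and "
        "source authority so that AI systems cite your content in answers.",
    ),
]

print(json.dumps(faq_jsonld(pairs), indent=2))
```

The printed JSON would go inside a `<script type="application/ld+json">` tag on the page and should be validated before publishing.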

Where GEO differs from AEO — and when it matters

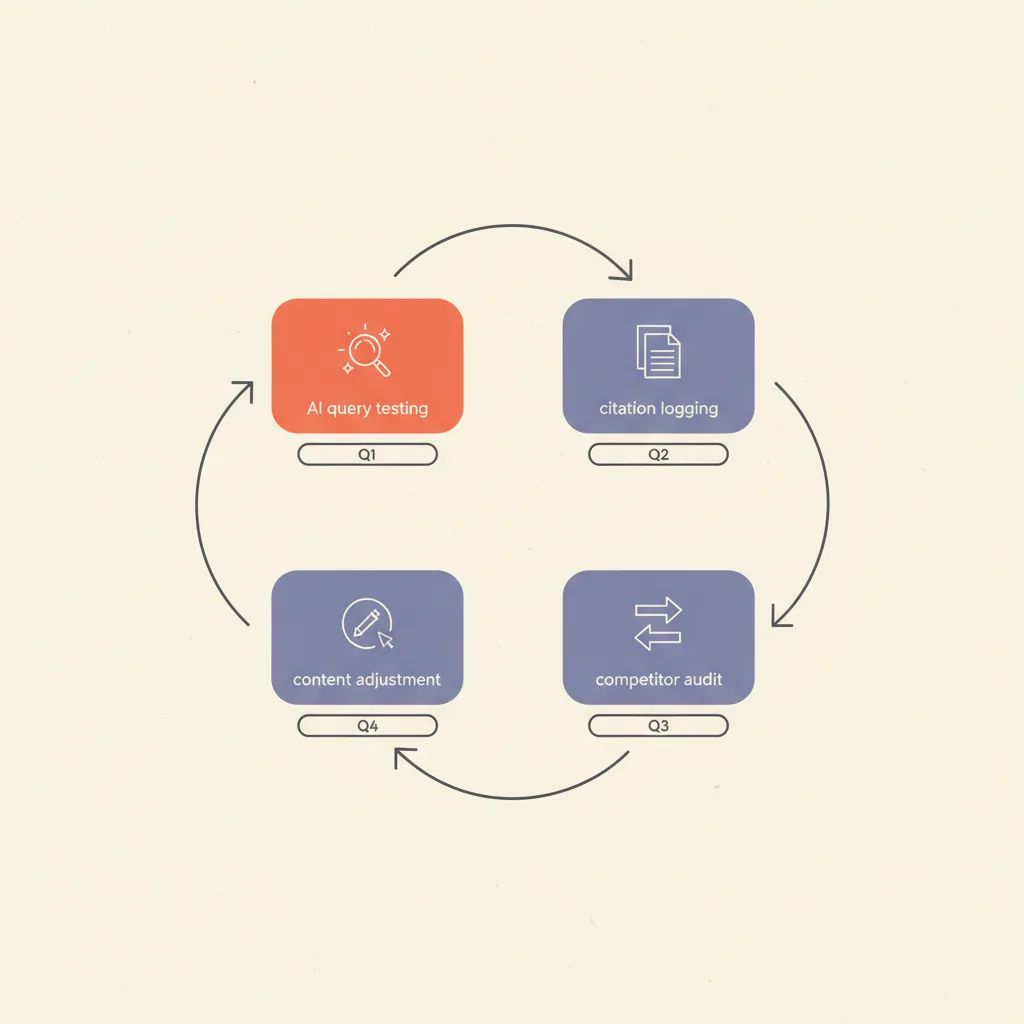

AEO is a faster-cycle optimization against a narrower target: getting extracted by existing AI search tools. GEO includes that work and extends it into the longer-cycle, higher-ceiling effort of building brand presence across the third-party platforms AI training data draws from: Reddit threads, Substack posts, LinkedIn articles, and review site listings. Our post "What is AEO" covers AEO's specific levers (schema validation, PAA alignment, freshness) in depth. The practical difference for operators deciding how to allocate effort: start with AEO for the first 60 days of any new content program, because schema, PAA FAQ, and citable clusters are all fast and cheap. Treat GEO's third-party presence work as the 6–12 month compounding layer that sets the citation ceiling for competitive queries.

If you are a marketing agency or operator running a content program today, AEO moves are the first 60 days: schema validation, PAA FAQ alignment, citable cluster length review. GEO moves are the ongoing 6–12 month work: getting listed on the review platforms the AI corpora draw from, building community presence on HN and in the relevant subreddits, and earning mentions in Substack newsletters that cover your space. The guide that covers this in the broader context of AI search is "how to get cited by AI — the GEO/SEO guide".

Measuring GEO — what to track and what to ignore

GEO tracking uses three proxies: direct AI Overview citation appearances (trackable via Semrush AI or SE Ranking's AI Overview tracker), Perplexity citation appearances (trackable via manual query testing or third-party GEO trackers like Scrunch AI and Otterly.ai), and brand mention growth across the platforms AI corpora draw from. None are as clean as organic rank tracking, but all three are actionable. The key challenge is attribution: when your brand appears in a Perplexity answer, the user may not click through, and revenue attribution requires a longer measurement window than organic search. The business case for GEO is brand presence at the moment of consideration — being the cited authority when a prospect is forming an opinion, at the 25–30% of queries where AI Overviews now appear and no click occurs.

| Metric | Signal quality | How to track |
|---|---|---|
| AI Overview citation appearances | High; direct citation pickup signal | Semrush AI, SE Ranking AI tracker, manual check |
| Perplexity citation appearances | High; strongest non-Google signal | Manual query testing by keyword; Perplexity API (beta) |
| ChatGPT citation appearances | Medium; less transparent attribution | Manual testing; not trackable at scale yet |
| Organic AI-referred traffic (GA4) | Medium; attribution still incomplete | GA4 source/medium filter for AI referrers |
| Brand mention growth (3rd party) | High; entity signal proxy | Brand24, Mention, or manual Reddit/LinkedIn monitoring |
| AI search share of voice | High if you can benchmark it | Third-party GEO trackers (Scrunch AI, Otterly.ai) |
| AI search CTR | Low; most AI answers resolve without a click | Not reliably measurable at this stage |
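For the GA4 row, a source/medium filter can be prototyped offline against exported rows before building it into a report. The sketch below is illustrative only: the hostname list is an assumption, not an official or complete list, and should be checked against the referrers that actually appear in your own GA4 data.

```python
# Assumed AI referrer hostnames; verify against your own GA4 reports.
AI_REFERRER_HOSTS = {
    "chatgpt.com",
    "perplexity.ai",
    "gemini.google.com",
    "copilot.microsoft.com",
}

def is_ai_referrer(source_medium):
    """Check a GA4-style 'source / medium' string against the assumed host list."""
    source = source_medium.split("/")[0].strip().lower()
    return any(
        source == host or source.endswith("." + host)
        for host in AI_REFERRER_HOSTS
    )

rows = ["chatgpt.com / referral", "www.perplexity.ai / referral", "google / organic"]
print([row for row in rows if is_ai_referrer(row)])
```

Matching on the exact host or a subdomain suffix avoids false positives from unrelated domains that merely contain one of these names.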

The honest picture: GEO measurement in 2026 is where SEO measurement was circa 2010. The signals exist, the tooling is fragmented, and the attribution is imperfect. We recommend a lightweight quarterly audit — run 10–15 key queries manually in ChatGPT, Perplexity, and Google AI Mode; note which of your posts appear as citations; track the count over time. Simple, imperfect, and more actionable than waiting for perfect tooling. The signal worth watching closest is not your own citation count but the competitor citation share: which domains are appearing as citations on the queries you want to own. That tells you both the ceiling (who has achieved GEO traction) and the gaps (which queries are underserved by high-quality cited content and therefore most approachable).
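The competitor citation share from a manual audit can be tallied with a short script. This sketch assumes the audit is logged as one row per observed citation in the form (query, engine, cited domain); the queries and domains below are hypothetical.

```python
from collections import Counter

def citation_share(observations):
    """Share of observed citations per domain, most-cited first."""
    counts = Counter(domain for _query, _engine, domain in observations)
    total = sum(counts.values())
    if total == 0:
        return {}
    return {domain: n / total for domain, n in counts.most_common()}

# Hypothetical audit log: (query, engine, cited domain).
audit_log = [
    ("what is geo", "Perplexity", "competitor.example"),
    ("what is geo", "ChatGPT", "competitor.example"),
    ("geo vs aeo", "Perplexity", "competitor.example"),
    ("geo vs aeo", "Google AI Mode", "yourbrand.example"),
]

for domain, share in citation_share(audit_log).items():
    print(f"{domain}: {share:.0%}")
```

Re-running the same query set each quarter and comparing the shares shows both who holds the ceiling and which queries remain underserved.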

GEO in the broader AI search cluster

GEO is one of three overlapping AI search optimization surfaces. Answer Engine Optimization (AEO) covers the extraction layer: getting pulled into AI-generated answers from existing retrieval pipelines. GEO covers the broader synthesis and corpus layer: building the brand entity presence that feeds both retrieval models and training data. Our post "AEO vs SEO" maps the tactical divergences for operators running combined programs. The implementation guide that ties all three together is "how to get cited by AI — the GEO/SEO guide", the step-by-step playbook that follows from what this post explains.

The architecture we build for clients runs all three surfaces off the same content workflow: one post, one research pass, one quarterly freshness review, with structural decisions (PAA FAQ, citable cluster length, H2 as questions) baked into the brief template. The overhead of running GEO and AEO alongside SEO is one additional checklist step at the draft stage and one schema validation step before publish. The return is citation coverage across the growing share of queries that never click through to a blue link.