A client running a content program for a B2B SaaS product found their content appearing in Perplexity citations within three weeks of a schema validation and PAA FAQ pass on their top 12 posts. Same content, same organic ranking, different extraction results. The only change was structural: broken JSON-LD fixed, FAQ sections rewritten to match verbatim PAA wording, H2 opener paragraphs expanded to the 130–160 word citable range. The work took one person one afternoon per post. Twelve posts, two weeks of elapsed calendar time.

That pattern is consistent enough that we build it into every content audit engagement as the first pass before any deeper SEO work: fast-cycle structural changes on existing content, producing measurable citation pickup. This guide covers the full implementation: what each AI engine actually looks for, the four-lever stack in order, and the longer-cycle off-page work that sets the citation ceiling for competitive queries.

How each AI engine selects sources — they are not the same

ChatGPT, Perplexity, Claude, Microsoft Copilot, and Google AI Overviews use different retrieval architectures, and the differences are large enough to change which optimization moves are worth making. Perplexity's cited sources correlate with Google's top-10 results at 91%, so traditional SEO covers most of it, with freshness and schema as the marginal additions. ChatGPT's citations overlap with Google's top-10 only 14% of the time, which means backlink authority and organic rank are weak predictors of ChatGPT citation pickup. Claude weights structured synthesis sources and topical depth. Microsoft Copilot follows Bing authority signals, which largely parallel Google's. Treating all five as a single optimization target produces content that is partially correct for each platform and fully correct for none.

| Engine | Correlation with Google top-10 | Primary citation signals | What this means operationally |
|---|---|---|---|
| Perplexity | 91% (very high) | Traditional search authority + freshness + structured data | SEO-optimized content covers most of it. Add freshness + schema. |
| Google AI Overviews | ~78% (high) | Traditional authority + E-E-A-T + featured snippet eligibility | SEO foundation is critical. Schema + FAQ structure matters. |
| Microsoft Copilot | ~62% (moderate) | Bing authority signals + entity relevance | Similar to Perplexity, but weighted toward Bing authority rather than Google |
| Claude | ~45% (moderate) | Structured synthesis sources + topical depth + coherence | Rewards depth and internal coherence over backlink count |
| ChatGPT | 14% (low) | Entity density + content structure + training data presence | Traditional SEO authority matters least here. Entity grounding and structure dominate. |

The practical implication: a single content optimization pass can capture 60–70% of citation pickup across all five platforms if it correctly addresses the signals they share — content depth, structured data, freshness, and PAA-aligned FAQ. The remaining 30–40% requires platform-specific work, primarily the third-party presence signals (Reddit, G2, LinkedIn, Substack) that feed ChatGPT's entity corpus and are largely irrelevant for Perplexity. Most operators should start with the shared signals and capture the 60–70% first. The platform-specific work compounds over months; the shared signal work returns within weeks. Running both in parallel is ideal if bandwidth allows, but when bandwidth is constrained, the four-lever stack on existing content is the correct first priority over third-party presence building.

The four-lever stack — in the right order

The order matters more than most AEO guides acknowledge. Lever 4 (freshness) applied to a post with broken schema wastes the freshness signal because the extraction engine cannot reliably read the page structure. Lever 3 (citable cluster construction) applied before lever 2 (PAA FAQ alignment) produces well-structured content that answers the wrong questions. The correct sequence: fix schema so extraction works at all, then set the PAA FAQ so extraction finds the right question-answer pairs, then tune cluster length so those pairs are in the AI-preferred 134–167 word range, then maintain freshness so the page stays in the candidate pool. Skipping step 1 is the most common failure mode in AEO programs — schema validation is unglamorous work that gets deferred until a practitioner runs a batch audit and discovers that half their JSON-LD is silently broken.
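
The sequencing rule works like a gate: work on the first lever whose check fails and leave the later ones alone until it passes. Below is a minimal Python sketch. It assumes you already record per-post pass/fail flags from your own audit. The `PostStatus` fields and lever names are hypothetical and do not come from any tool.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class PostStatus:
    # Hypothetical pass/fail flags recorded during an audit of one post.
    schema_valid: bool
    paa_faq_aligned: bool
    clusters_in_range: bool
    fresh: bool


# Levers in the prescribed order; each check gates everything after it.
LEVER_ORDER = [
    ("schema", "schema_valid"),
    ("paa_faq", "paa_faq_aligned"),
    ("citable_clusters", "clusters_in_range"),
    ("freshness", "fresh"),
]


def next_lever(status: PostStatus) -> Optional[str]:
    """Return the first lever whose check fails, or None when all four pass."""
    for lever, flag in LEVER_ORDER:
        if not getattr(status, flag):
            return lever
    return None


# A post with current dates but broken schema still starts at lever 1.
print(next_lever(PostStatus(schema_valid=False, paa_faq_aligned=True,
                            clusters_in_range=False, fresh=True)))
```

Checking the flags in this fixed order keeps a freshness bump from being spent on a page whose schema the extractor cannot read.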

Lever 1 — Schema audit and validation

Broken or absent JSON-LD schema is the most common silent failure in AEO, and the most common reason a technically solid post never appears in AI citations. It produces no visible error on the page; the markup simply stops working. When schema is malformed, the extraction engine cannot reliably identify FAQ question-answer pairs, article metadata, or breadcrumb structure. Before any other optimization, validate every knowledge post's JSON-LD using Google's Rich Results Test or a batch crawler such as Screaming Frog or Sitebulb. The most common failure modes are FAQPage schema whose question text does not exactly match the rendered HTML (often caused by entity encoding differences or trailing whitespace), Article schema missing the `dateModified` field (which kills the freshness signal), and BreadcrumbList entries pointing to URLs that return 404. All three are invisible to the human reader and invisible in your CMS until you run the validator.
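
The first failure mode is easy to check mechanically once you have both strings. Below is a minimal sketch. It assumes you have already extracted each FAQ question from the JSON-LD and from the rendered page, in the same order; the function names are illustrative. An exact comparison catches every mismatch. A second comparison, run after decoding HTML entities and collapsing whitespace, shows whether a difference comes only from encoding or stray spaces.

```python
import html
import re


def normalize(text: str) -> str:
    """Decode HTML entities and collapse runs of whitespace (including non-breaking spaces)."""
    return re.sub(r"\s+", " ", html.unescape(text)).strip()


def diagnose(schema_text: str, rendered_text: str) -> str:
    """Classify one schema/HTML question pair."""
    if schema_text == rendered_text:
        return "match"
    if normalize(schema_text) == normalize(rendered_text):
        return "encoding/whitespace only"
    return "text differs"


def audit_faq(faq_jsonld: dict, rendered_questions: list[str]) -> list[tuple[str, str]]:
    """Pair FAQPage questions with rendered questions by position and diagnose each pair."""
    schema_questions = [q.get("name", "") for q in faq_jsonld.get("mainEntity", [])]
    if len(schema_questions) != len(rendered_questions):
        return [("<count>", f"{len(schema_questions)} in schema vs {len(rendered_questions)} on page")]
    return [(s, diagnose(s, r)) for s, r in zip(schema_questions, rendered_questions)]


# Placeholder FAQ: the second question carries an HTML entity and a trailing space.
faq = {"@type": "FAQPage", "mainEntity": [
    {"@type": "Question", "name": "What is AEO?"},
    {"@type": "Question", "name": "AEO &amp; GEO: what changes? "},
]}
print(audit_faq(faq, ["What is AEO?", "AEO & GEO: what changes?"]))
```

JSON-LD inside a `<script>` tag is not entity-decoded by the browser, so a literal `&amp;` in the schema stays `&amp;` while the visible text shows `&`. This is exactly the kind of invisible mismatch described above.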

What to validate and fix:

- Article schema — required on every knowledge post. Includes `headline`, `datePublished`, `dateModified`, `author`, and `publisher` with `@type: Organization`. Missing `dateModified` is a common silent failure that kills the freshness signal.

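- FAQPage schema — required on every post with a FAQ section. Each FAQ entry is a `Question` whose `name` holds the question text and whose `acceptedAnswer` is an `Answer` with the answer in `text`. Both strings must match the rendered HTML exactly. Google's structured data guidelines require marked-up content to be visible on the page, so mismatches between schema text and visible text put rich result eligibility at risk and can undermine AI extraction.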

- BreadcrumbList schema — helps AI engines understand the site's topical hierarchy. A knowledge post on AEO that is nested under `/solutions/aeo-geo/` in breadcrumbs signals topical authority in that space.
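
The Article and BreadcrumbList checks in this list can be scripted against JSON-LD you have already parsed into dictionaries. Below is a minimal sketch that assumes ISO 8601 date strings. It reports missing Article fields, a non-Organization publisher, and a `dateModified` earlier than `datePublished`. It also collects breadcrumb URLs so you can pass them to your crawler for the 404 check; no network calls are made here. The example article values are placeholders.

```python
from datetime import date, datetime

REQUIRED_ARTICLE_FIELDS = ["headline", "datePublished", "dateModified", "author", "publisher"]


def _parse_date(value: str) -> date:
    # Accepts "2026-04-02" or "2026-04-02T09:00:00+00:00"; a trailing "Z" needs Python 3.11+.
    return datetime.fromisoformat(value).date()


def audit_article(article: dict) -> list[str]:
    """Return human-readable problems found in one Article JSON-LD object."""
    problems = [f"missing {field}" for field in REQUIRED_ARTICLE_FIELDS if not article.get(field)]
    publisher = article.get("publisher")
    if isinstance(publisher, dict) and publisher.get("@type") != "Organization":
        problems.append("publisher @type is not Organization")
    published, modified = article.get("datePublished"), article.get("dateModified")
    if published and modified:
        try:
            if _parse_date(modified) < _parse_date(published):
                problems.append("dateModified is earlier than datePublished")
        except ValueError:
            problems.append("date could not be parsed")
    return problems


def breadcrumb_urls(breadcrumbs: dict) -> list[str]:
    """Collect item URLs from a BreadcrumbList for an external 404 check."""
    urls = []
    for element in breadcrumbs.get("itemListElement", []):
        item = element.get("item")
        # "item" may be a URL string or an object carrying the URL in "@id".
        if isinstance(item, str):
            urls.append(item)
        elif isinstance(item, dict) and "@id" in item:
            urls.append(item["@id"])
    return urls


article = {
    "@type": "Article",
    "headline": "How to get cited by AI answer engines",
    "datePublished": "2026-01-10",
    "author": {"@type": "Person", "name": "Example Author"},
    "publisher": {"@type": "Organization", "name": "Example Co"},
}
print(audit_article(article))
```
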

Cost: zero beyond validation tool access. Time: 15–30 minutes per post for a manual fix pass; 2–4 hours for a full batch audit with automated tooling. This is the unglamorous prerequisite for everything else in the stack. The reason it gets skipped: schema validation does not feel productive in the way that writing new content does. There is nothing to show at the end of an hour of validation work — just a fixed invisible layer that the human reader never sees. But the return on that hour is higher than writing a new post on a site where half the existing schema is broken, because the broken schema is actively suppressing citation pickup on content you have already invested in.

Lever 2 — Verbatim PAA FAQ alignment

People Also Ask questions are the most direct signal available for what AI answer engines expect to find on a page about a given query. Google surfaces PAA based on what users actually ask after seeing the main result — these are the sub-questions the AI answer engine is trained to resolve. When a FAQ section uses PAA wording verbatim, the AI extraction engine scores that passage at a higher confidence level because the question-answer pair is pre-validated against real user behavior. The key mechanic: Google's AI Overview extraction engine has already calibrated against PAA wording when deciding what a "good answer" to a query looks like. A FAQ answer written in response to a verbatim PAA question is, by construction, the format the engine is looking for. You are not trying to game the signal — you are responding in the exact format the extractor was designed to process.
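
One way to keep PAA wording verbatim from brief to page is to generate the visible FAQ markup and the FAQPage JSON-LD from the same list of question-answer pairs. Below is a minimal sketch. It assumes the questions were copied by hand from the PAA box. The helper names are hypothetical and the example pair is a placeholder.

```python
import html
import json


def build_faq_jsonld(pairs: list[tuple[str, str]]) -> str:
    """Serialize (question, answer) pairs as schema.org FAQPage JSON-LD."""
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }
    # ensure_ascii=False keeps non-ASCII characters readable instead of \uXXXX escapes.
    return json.dumps(data, ensure_ascii=False, indent=2)


def render_faq_html(pairs: list[tuple[str, str]]) -> str:
    """Render the same pairs as visible HTML, escaping special characters for HTML only."""
    return "\n".join(
        f"<h3>{html.escape(q)}</h3>\n<p>{html.escape(a)}</p>" for q, a in pairs
    )


# Placeholder pair; in practice the question string is pasted verbatim from PAA.
pairs = [("How long does AEO take to work?", "Placeholder answer text.")]
print(render_faq_html(pairs))
print(build_faq_jsonld(pairs))
```

The browser decodes `&amp;` in the HTML back to `&`, while the JSON-LD keeps the raw string. Because both outputs come from one source, the visible text and the schema show the same characters, which is the exact-match condition lever 1 checks for.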

The implementation:

Expected result: measurable improvement in AI Overview and Perplexity citation pickup within 2–4 weeks of deploy on posts with strong organic rankings. Posts with weak organic rankings benefit less in the short term — PAA alignment on a page the AI engine cannot find in its candidate pool is a partial fix. The sequencing advice: apply PAA FAQ alignment to your top 10 organic pages first. These are already in the candidate pool; you are upgrading their extraction eligibility. After those show results, move to mid-ranking pages and apply the same pass. New content gets PAA alignment at the draft stage — it is a zero-marginal-cost addition to the content brief when done before writing rather than as a retrofit.

Lever 3 — Citable cluster construction

The citable cluster is the structural unit that AI extraction engines quote. It is a contiguous run of text, typically one dense paragraph of 134–167 words, that fully answers the section's heading question without requiring surrounding context. AI extractors prefer this length range because it is long enough to be self-contained and short enough to quote without heavy paraphrasing that would blur the attribution. A cluster is measured as the contiguous paragraph run between any two structural interruptions (headings, bullet lists, tables, figures). A single 150-word paragraph is one cluster. Two 80-word paragraphs separated only by a line break are one 160-word cluster. Two 80-word paragraphs separated by a bullet list are two 80-word clusters, both too short to be reliably citable. This structural detail matters for writing choices: where you put bullet lists and tables determines how your paragraph clusters measure.
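
The measurement rule is mechanical enough to sketch in code. Below is a minimal Python version. It assumes the post has already been parsed into an ordered list of `(kind, text)` blocks, where `"p"` marks a paragraph and any other kind (heading, list, table, figure) counts as a structural interruption. Words are counted by splitting on whitespace, so totals may differ slightly from a CMS word count.

```python
Block = tuple[str, str]  # (kind, text); kind "p" means paragraph


def cluster_word_counts(blocks: list[Block]) -> list[int]:
    """Merge consecutive paragraphs into clusters and return each cluster's word count."""
    clusters: list[int] = []
    current = 0
    for kind, text in blocks:
        if kind == "p":
            current += len(text.split())
        else:
            # Any non-paragraph block ends the running cluster.
            if current:
                clusters.append(current)
            current = 0
    if current:
        clusters.append(current)
    return clusters


def is_citable(words: int, low: int = 134, high: int = 167) -> bool:
    return low <= words <= high


p80 = " ".join(["word"] * 80)
print(cluster_word_counts([("p", p80), ("p", p80)]))                      # one 160-word cluster
print(cluster_word_counts([("p", p80), ("ul", "- a\n- b"), ("p", p80)]))  # two 80-word clusters
```
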

Most posts fail this test at the H2 opener level: they open H2 sections with 40–80 word scene-setting paragraphs that explain what the section will cover rather than directly answering the heading question. The fix:

- Write H2 openers as direct answers — the first sentence of the H2 opener should state the full answer to the heading question. The following sentences should provide evidence, specificity, and context. The opener paragraph is what gets extracted; the following paragraphs are what keeps the human reader engaged.

- Target 120–160 words per H2 opener — review draft posts and count the words in each H2 opener. Openers under 100 words need expansion; openers over 180 words should be tightened by moving context to following paragraphs.

- Check for self-containment — read each H2 opener in isolation. If it makes sense without surrounding context and directly answers the heading question, it is extractable. If it requires "as mentioned above" or assumes context from a previous section, it is not.
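
These three checks can run as a first-pass script over each H2 opener before a human read. Below is a minimal sketch that uses the word bands above. The back-reference phrase list is a hypothetical heuristic. It will miss some context-dependent openers, so it does not replace reading each opener in isolation.

```python
import re

# Hypothetical phrases suggesting the opener depends on earlier context.
BACKREFERENCE_PATTERNS = [
    r"\bas (?:mentioned|noted|discussed|described) (?:above|earlier|before)\b",
    r"\bsee above\b",
    r"\b(?:previous|last) section\b",
]


def review_opener(text: str) -> list[str]:
    """Return review notes for one H2 opener paragraph; an empty list means no flags."""
    notes = []
    words = len(text.split())
    if words < 100:
        notes.append(f"expand: {words} words (under 100)")
    elif words > 180:
        notes.append(f"tighten: {words} words (over 180)")
    elif not 120 <= words <= 160:
        notes.append(f"close: {words} words (target 120-160)")
    for pattern in BACKREFERENCE_PATTERNS:
        match = re.search(pattern, text, flags=re.IGNORECASE)
        if match:
            notes.append(f'not self-contained: "{match.group(0)}"')
    return notes


print(review_opener("As mentioned above, " + "word " * 50))
```
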

Lever 4 — Freshness maintenance

Content updated within the last 30 days receives 3.2x more AI citations than equivalent content that has not been touched, per GEO Alliance's April 2026 study (source: learn.geoalliance.co). The mechanism is practical: AI crawlers re-index updated content, which moves it up in the recency filter applied to the candidate pool. 85% of AI citations reference material published or updated within the last two years, and content older than 18 months drops sharply in citation pickup regardless of quality. The most efficient freshness maintenance approach for a large content base is a tiered calendar: high-traffic posts get a quarterly review, mid-traffic posts get a semi-annual (every six months) review, and low-traffic posts get an annual review. The quarterly and semi-annual reviews do not require rewrites. They require one substantive change and a schema date update per post, which is 15–20 minutes of work if the change is targeted.
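
The tiered calendar reduces to one review interval per traffic tier. Below is a minimal sketch. It assumes you have already assigned each post a tier and reads the middle tier as every six months. The tier labels, slugs, and post dictionary shape are hypothetical.

```python
import calendar
from datetime import date

# Review interval in months per traffic tier (quarterly, semi-annual, annual).
REVIEW_INTERVAL_MONTHS = {"high": 3, "mid": 6, "low": 12}


def add_months(d: date, months: int) -> date:
    """Shift a date by whole months, clamping the day to the target month's length."""
    month_index = d.month - 1 + months
    year = d.year + month_index // 12
    month = month_index % 12 + 1
    day = min(d.day, calendar.monthrange(year, month)[1])
    return date(year, month, day)


def next_review(last_reviewed: date, tier: str) -> date:
    return add_months(last_reviewed, REVIEW_INTERVAL_MONTHS[tier])


def overdue(posts: dict[str, tuple[date, str]], today: date) -> list[str]:
    """Return slugs whose next review date is on or before today, sorted alphabetically."""
    return sorted(
        slug for slug, (last, tier) in posts.items() if next_review(last, tier) <= today
    )


posts = {
    "aeo-implementation-guide": (date(2026, 1, 15), "high"),
    "what-is-aeo": (date(2026, 1, 15), "low"),
}
print(overdue(posts, today=date(2026, 5, 1)))
```

Clamping the day (for example, November 30 plus three months lands on February 28) keeps the schedule from raising an error at month ends.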

The quarterly freshness maintenance process:

- Audit — identify posts with no update in the last 90 days. Priority: posts targeting keywords with active AI Overviews or Perplexity citation appearances.

- Update — make at least one substantive change per post: correct outdated tool pricing, add one new statistic from the last 30 days, or update a recommendation based on a tool that shipped new features.

- Bump dates — update `dateModified` in both the post data and the `Article` JSON-LD schema. The date signal is read directly by AI crawlers; a content update without a schema date update only partially triggers the freshness signal.

- Validate — re-run the Rich Results Test after any schema date change. A schema date bump that introduces a formatting error silently cancels the freshness benefit.
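
The audit and date-bump steps can be sketched as two small functions. Below is a minimal Python version. It assumes each post is a dictionary with hypothetical `slug`, `last_updated`, and `has_ai_surface` fields, where the last one marks keywords where you have seen an AI Overview or Perplexity citation. It also assumes the Article JSON-LD has already been parsed. It does not replace re-running the Rich Results Test after the bump.

```python
from datetime import date, timedelta


def stale_posts(posts: list[dict], today: date, max_age_days: int = 90) -> list[str]:
    """Slugs with no update in the last max_age_days; AI-surface keywords first, oldest first."""
    cutoff = today - timedelta(days=max_age_days)
    stale = [p for p in posts if p["last_updated"] < cutoff]
    # False sorts before True, so negating the flag puts AI-surface posts first.
    stale.sort(key=lambda p: (not p["has_ai_surface"], p["last_updated"]))
    return [p["slug"] for p in stale]


def bump_date_modified(post: dict, article_jsonld: dict, today: date) -> None:
    """Set the new modification date in both the post record and the Article JSON-LD."""
    # Compare dates only; the first 10 characters of an ISO 8601 value are YYYY-MM-DD.
    published = date.fromisoformat(article_jsonld["datePublished"][:10])
    if today < published:
        raise ValueError("dateModified would precede datePublished")
    post["last_updated"] = today
    article_jsonld["dateModified"] = today.isoformat()


posts = [
    {"slug": "tool-pricing-roundup", "last_updated": date(2026, 1, 1), "has_ai_surface": False},
    {"slug": "aeo-checklist", "last_updated": date(2026, 2, 1), "has_ai_surface": True},
    {"slug": "what-is-geo", "last_updated": date(2026, 5, 1), "has_ai_surface": True},
]
print(stale_posts(posts, today=date(2026, 6, 1)))
```

The check runs before either record is changed, so a bad date leaves both the post data and the schema untouched.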

Engine-specific playbooks

The four-lever stack is the foundation. Once it is in place, the engine-specific playbook addresses the remaining platform-specific gaps. Each engine has idiosyncratic signals beyond the shared foundation — signals that reflect the different retrieval architectures described at the top of this post. The table below maps the supplemental moves per platform, in order of time-to-result. None of them are worth running before the foundation is solid; they are marginal improvements on top of a working base, not substitutes for it.

| Engine | Additional signals | What to do | Time to result |
|---|---|---|---|
| Claude | Structured synthesis sources + depth | Write posts of 1,800+ words covering a topic end-to-end with named sources and specific numbers | 4–8 weeks |
| Microsoft Copilot | Bing authority signals | Verify indexing in Bing Webmaster Tools; optimize for Bing (largely overlaps with Google SEO) | 4–8 weeks |
| Perplexity | Traditional Google SEO authority | Standard SEO: on-page optimization, internal link cluster, organic rank improvement | 4–12 weeks |
| Google AI Overviews | E-E-A-T signals: authorship, org trust | Add author schema, link to About page, get cited by .edu/.gov or major publications | 4–12 weeks |
| ChatGPT | Entity grounding in training corpus | Get mentioned on Reddit (relevant subreddits), Hacker News, Substack, and LinkedIn articles with named brand attribution | 3–6 months |

The third-party presence stack — the 6-month compounding investment

The four levers above are the fastest to return, and they operate on content you already own. The highest-ceiling lever is third-party brand mentions across the platforms AI training corpora draw from, which requires building presence outside your own domain. Review site research by GEO Alliance (April 2026) shows third-party mentions correlate with AI citation pickup roughly 3x more strongly than backlinks. This is counterintuitive for operators with established SEO programs, but the mechanism is clear: AI training data includes Reddit, HN, Substack, LinkedIn, G2, and Capterra at far higher relative density than the average web page. Brand presence on those platforms feeds entity grounding in a way a homepage backlink from a small blog does not.

The third-party presence checklist, in order of accessibility:

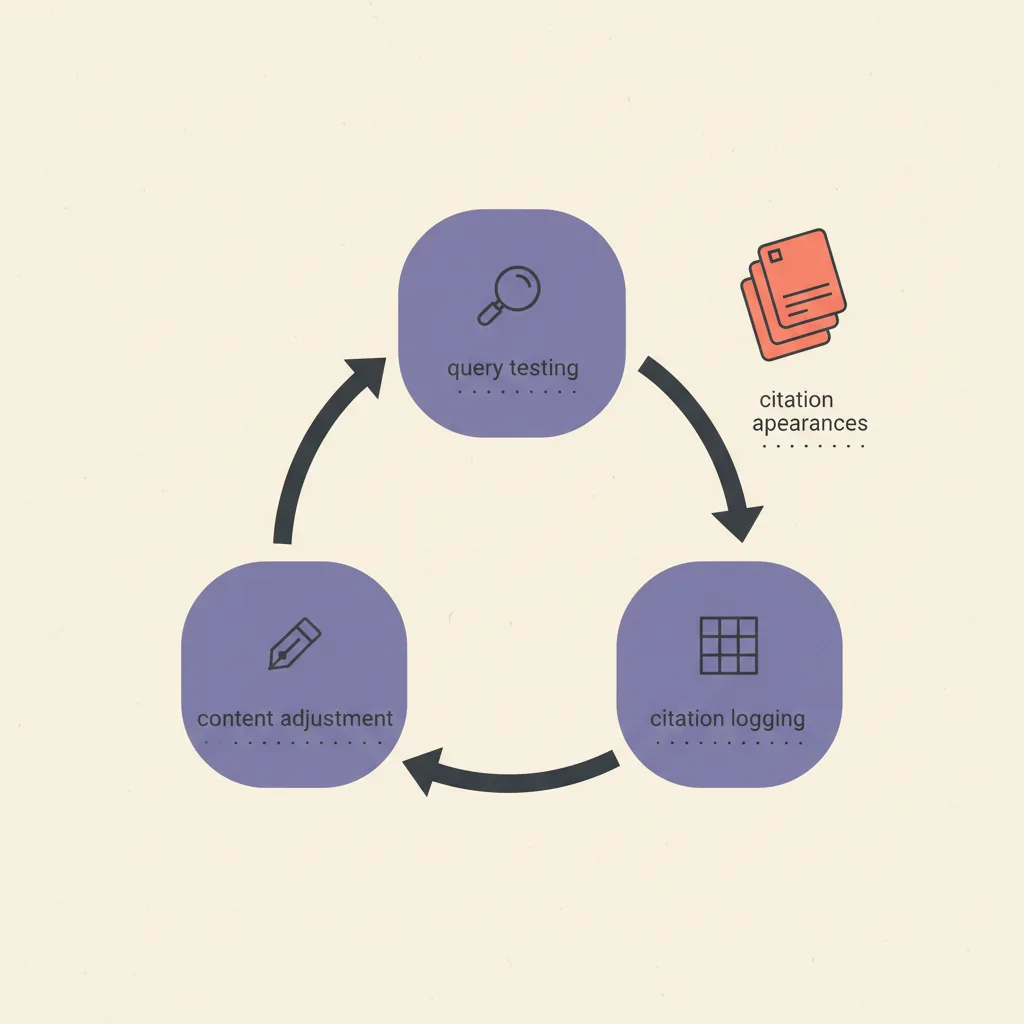

Measuring citation pickup — what to track

Measuring GEO results is less mature than measuring SEO results, but there are enough proxies to run a useful tracking workflow without enterprise tooling. The core challenge is that most AI citation appearances do not generate a click — the user gets the answer and moves on without clicking through to your page. This means organic traffic metrics will not reliably capture GEO performance. The signal you need to track is citation appearances themselves: how often does your content appear as a named source in AI-generated answers? The table below maps the best available tracking methods by platform. None are perfect; the combination of manual testing, AI Overview monitoring, and brand mention alerts covers 80% of what matters.

| Platform | Tracking method | Tool | Effort |
|---|---|---|---|
| Perplexity | Most trackable: test target queries manually | Manual query testing; Perplexity API (beta) for batch | Low (15 min/week) |
| Google AI Overviews | Semrush AI or SE Ranking AI tracker | Semrush, SE Ranking | Medium (needs paid tool) |
| ChatGPT | Manual testing; no batch API yet | Manual ChatGPT queries on target terms | Low (20 min/week) |
| Claude | Manual testing; no public tracker | Manual Claude queries on target terms | Low (15 min/week) |
| All engines | Brand mention monitoring (3rd party) | Brand24, Mention, Google Alerts | Low (set alerts once) |
| All engines | AI-referred traffic in GA4 | GA4 source/medium filter | Low (filter setup once) |
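
For the GA4 row, the filter amounts to matching the session source against a list of AI assistant hostnames. Below is a minimal sketch. The hostname list is an assumption based on commonly seen referrers. Check it against the referral sources in your own GA4 property, since these domains change. Google AI Overviews clicks generally arrive as ordinary Google referrals and cannot be separated this way.

```python
import re
from typing import Optional
from urllib.parse import urlparse

# Assumed AI assistant referrer hostnames; verify against your own referral data.
AI_REFERRERS = {
    "chatgpt.com": "ChatGPT",
    "chat.openai.com": "ChatGPT",
    "perplexity.ai": "Perplexity",
    "copilot.microsoft.com": "Microsoft Copilot",
    "claude.ai": "Claude",
}


def classify_referrer(referrer_url: str) -> Optional[str]:
    """Map a referrer URL to an AI engine name, or None if its host is not in the list."""
    host = (urlparse(referrer_url).hostname or "").lower()
    for domain, engine in AI_REFERRERS.items():
        if host == domain or host.endswith("." + domain):
            return engine
    return None


def ga4_source_regex() -> str:
    """Build an alternation pattern from the same hostnames for a regex-based source filter."""
    return "|".join(re.escape(domain) for domain in AI_REFERRERS)


print(classify_referrer("https://www.perplexity.ai/search?q=aeo"))
print(ga4_source_regex())
```

Generating the pattern from the same dictionary keeps the spreadsheet classification and the GA4 filter in step when a hostname is added.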

The weekly tracking workflow we use: run 10–15 priority queries manually in ChatGPT, Perplexity, and Google AI Mode; log which posts appear as citations; track the count over time in a spreadsheet. Imperfect, but 30 minutes per week and more actionable than waiting for perfect tooling. A monthly trend line of citation appearances across 15 queries is enough to see whether the four-lever stack is working. The leading indicators to watch in the first 4–8 weeks: Perplexity citation appearances (fastest-moving indicator, correlates with Google rank) and AI Overview appearances on your top keywords (requires a paid tool but provides the clearest signal on Google-side AEO progress). ChatGPT citation pickup is the slowest to move and the hardest to track, but it compounds — once your entity presence is established in the training corpus, it persists.
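
The spreadsheet step is a simple aggregation. Below is a minimal sketch. It assumes each row of the log records the check date, engine, query, and whether one of your posts was cited; the field names are hypothetical. It reports cited checks over total checks per month and engine, so a month with fewer checks does not look like a drop in pickup.

```python
from collections import Counter
from datetime import date


def monthly_citation_rate(log: list[dict]) -> dict[tuple[str, str], tuple[int, int]]:
    """Return {(YYYY-MM, engine): (cited checks, total checks)}, sorted by month then engine."""
    cited: Counter = Counter()
    checked: Counter = Counter()
    for row in log:
        key = (row["date"].strftime("%Y-%m"), row["engine"])
        checked[key] += 1
        if row["cited"]:
            cited[key] += 1
    return {key: (cited[key], checked[key]) for key in sorted(checked)}


log = [
    {"date": date(2026, 5, 4), "engine": "Perplexity", "query": "aeo checklist", "cited": True},
    {"date": date(2026, 5, 4), "engine": "Perplexity", "query": "what is geo", "cited": False},
    {"date": date(2026, 6, 1), "engine": "Perplexity", "query": "aeo checklist", "cited": True},
    {"date": date(2026, 6, 1), "engine": "ChatGPT", "query": "aeo checklist", "cited": False},
]
for (month, engine), (hits, total) in monthly_citation_rate(log).items():
    print(month, engine, f"{hits}/{total}")
```
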

How this connects to the AEO/GEO cluster

This post is the implementation layer of the AEO/GEO cluster at digicore101. The conceptual foundations are covered in three sibling posts: "What is AEO" explains the AEO signal stack in depth, "Generative engine optimization explained" covers the GEO architecture and measurement, and "AEO vs SEO" maps the tactical divergences for operators running combined programs. This post is where you act on what those three posts explain. The four levers in this post are sequenced for a reason: each one unlocks the next. Schema must work before extraction reads FAQ. PAA FAQ must be set before citable clusters are tuned. Citable clusters must be present before freshness maintenance delivers full return. Do not run them out of order, and do not stop after just the first two. The compounding value in this stack comes from having all four levers active simultaneously.

For the full implementation as a managed engagement (schema audit, PAA alignment pass, citable cluster review, freshness calendar, and third-party presence outreach), see the AEO/GEO service page. The typical engagement for an operator with 20+ published posts is 4–6 weeks for the four-lever pass on existing content, plus a quarterly cadence for freshness and presence growth. The self-service path for operators with in-house content capacity: apply the four levers to your top 10 posts in the first month, then add the third-party presence checklist as a parallel ongoing effort. Most operators who complete that sequence see measurable citation pickup within 30–60 days on Perplexity and AI Overviews, with ChatGPT pickup compounding over the following 3–6 months.