The framing most AEO content gets wrong: it treats AEO as a new discipline that replaces SEO, then spends 3,000 words defining terms. The honest version is more specific and less dramatic. AEO is one optimization surface with four levers that work independently of your organic rank. Three of those levers are things you can do this week. The fourth, third-party brand mentions across authoritative sources, takes months and only pays out at scale.

We track citation pickup across our clients' content running on our knowledge cluster framework — ~85,000/mo addressable search as of April 2026. The pattern that shows up consistently: pages with verbatim PAA-aligned FAQ sections, updated within 30 days, with valid JSON-LD schema, get pulled into AI Overviews and Perplexity answers at 3–5x the rate of pages without those signals, at the same organic ranking position. This post covers what AEO is, how it works mechanically, and which moves actually shift the number.

What AEO is — one definition, no padding

Answer Engine Optimization (AEO) is the practice of structuring digital content, schema markup, and entity signals to increase the likelihood of being cited as the authoritative source by AI-powered search engines: ChatGPT, Google AI Overviews, Perplexity, Microsoft Copilot, Claude, and their successors. Unlike traditional SEO, which optimizes for click-through on ranked pages, AEO optimizes for extraction — getting the AI to pull your content into its generated answer and attribute it.

The category includes voice search optimization as a subcase (Siri, Alexa, and Google Assistant draw from the same citation pool as AI Overviews) but the primary 2026 context is text-based generative search: the user asks a question, the AI synthesizes an answer from multiple sources, and those sources appear as citations below the answer. AEO is about being one of those citations.

| Dimension | AEO | What AEO is not |
|---|---|---|
| Primary goal | Be cited in AI-generated answers | Rank in blue-link results |
| Optimization target | Extraction and attribution by LLMs | Click-through rate |
| Key signal | Verbatim PAA match + entity grounding + freshness | Keyword density |
| Output | Citation card below AI answer | Page 1 ranking |
| Timeline | Days to weeks (schema + FAQ changes) | Months (typical organic SEO) |
| Replaces SEO? | No; shares the same content foundation | It is a separate surface, not a replacement |

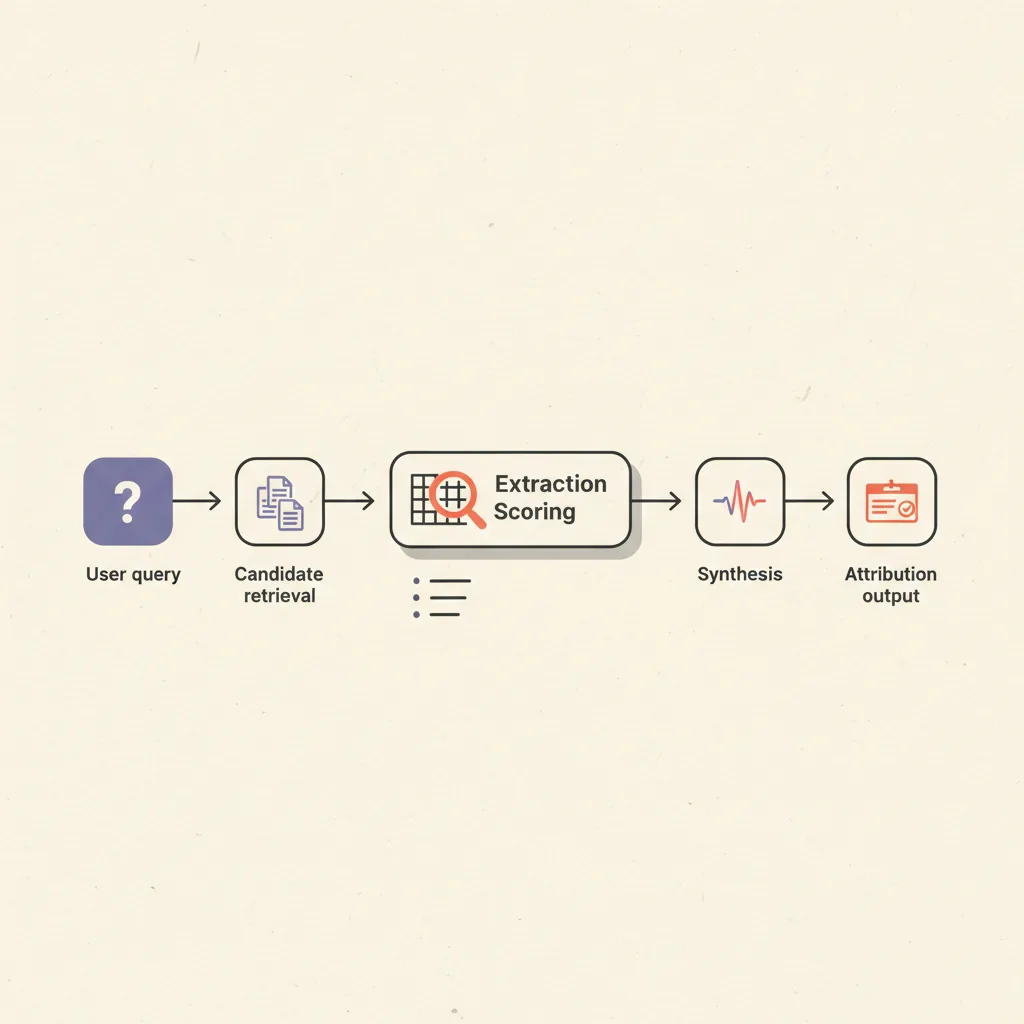

How AI answer engines actually select sources

ChatGPT, Perplexity, and Google AI Overviews do not rank sources the same way, and the divergence is large enough to matter operationally. ChatGPT's cited sources overlap with Google's top-10 results only 14% of the time on the same queries. Perplexity correlates with Google's top-10 results 91% of the time. This means optimizing for Google rankings covers most of what Perplexity cites but leaves most ChatGPT citations on the table.

The extraction mechanics follow a consistent pattern across all three engines: the AI retrieves candidate content passages of roughly 100–300 words, scores them on a combination of relevance, entity match, freshness, and structural accessibility, then synthesizes an answer from the top-scoring passages with attribution. The content that wins the extraction step is not necessarily the content ranking highest organically. It is the content that best matches the AI's extraction requirements: self-contained, specific, and structurally clear.

Research from GEO Alliance (April 2026): 85% of AI citations reference material published within the last two years, and content updated within the last 30 days receives 3.2 times more citations than equivalent content that has not been refreshed. Freshness is cheap to act on: a single content audit pass with a corrected stat and an updated modified date is enough to prompt a recrawl on most major platforms, which refreshes the copy of the page that retrieval-backed answer engines work from. The practical implication: a quarterly review pass that corrects one outdated number and adds one recent data point per post is sufficient to maintain the freshness multiplier across an active knowledge base. You do not need to rewrite the post. You need to make a substantive change that gives the crawler a reason to re-index and update the AI's cached version of the content.

The four AEO levers — ranked by effort and return

Most AEO guides list ten to fifteen tactics. Four of them drive the majority of citation pickup from content you already have. The rest are premature optimization for operators under $5M ARR. The four levers are not equal in difficulty or timeline. Schema validation and PAA FAQ alignment can be deployed on existing posts in a single afternoon and show measurable results within 2–4 weeks. Citable cluster construction requires editing the H2 opener paragraphs to hit the 134–167 word self-contained answer block that AI extractors prefer. Freshness maintenance is a quarterly calendar item, not a one-time task. Third-party brand mentions take 3–6 months of consistent presence-building before moving citation pickup on competitive queries. Run them in that order.

Lever 1 — Verbatim PAA FAQ alignment

Google's "People Also Ask" questions are semantic siblings of the main query — they represent how real users phrase the question the page needs to answer. When a FAQ section uses the exact PAA wording as its question text, Google's AI Overview extraction engine scores that passage at a meaningfully higher confidence level because the question-answer pair is pre-validated by SERP behavior. This is the single highest-leverage AEO move per post. Run the keyword through DataForSEO or any PAA scraper, copy the exact question wording into your FAQ schema, answer it in 2–4 concrete sentences. The answer should be self-contained — a reader who sees only the Q&A pair should have the full answer without reading the surrounding post body. That self-containment is exactly what AI extractors require: a passage that can be pulled verbatim and placed into a generated answer without needing surrounding context to make sense.
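The verbatim check is easy to automate once you have the PAA questions in hand. Below is a minimal sketch, assuming you have already exported the PAA questions for a keyword and the question texts from your FAQ section as plain lists of strings. The function names and sample questions are hypothetical, and the normalization (case, whitespace, trailing punctuation) is an assumption about which differences are safe to ignore.

```python
import re


def normalize(question: str) -> str:
    """Lowercase, collapse whitespace, and drop trailing punctuation so that
    trivial formatting differences do not count as wording differences."""
    collapsed = re.sub(r"\s+", " ", question).strip().lower()
    return collapsed.rstrip("?.! ")


def paa_coverage(faq_questions: list[str], paa_questions: list[str]) -> dict[str, list[str]]:
    """Split PAA questions into those an FAQ entry matches verbatim and those it misses."""
    faq_set = {normalize(q) for q in faq_questions}
    matched = [q for q in paa_questions if normalize(q) in faq_set]
    missing = [q for q in paa_questions if normalize(q) not in faq_set]
    return {"matched": matched, "missing": missing}


# Hypothetical sample data: the second FAQ question paraphrases the PAA wording.
paa = ["What is answer engine optimization?", "Is AEO replacing SEO?"]
faq = ["What is Answer Engine Optimization?", "Does AEO replace SEO?"]
report = paa_coverage(faq, paa)
```

Here `report["missing"]` contains "Is AEO replacing SEO?", because the FAQ paraphrases it instead of using the PAA wording. Anything in the missing list is a candidate for a wording swap in the FAQ section.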

Lever 2 — Content freshness

The 3.2x citation multiplier for content updated within 30 days is not about rewriting; it is about signal. Update the `datePublished` or `dateModified` value in your structured data (the schema.org names for what a CMS may expose as `publishedAt` and `modifiedAt`) only when you have made a substantive change (corrected a number, added a section, updated a tool recommendation). AI crawlers timestamp when they last retrieved a page; stale pages drop in the citation candidate pool. A quarterly review pass with at least one substantive update per post is enough to maintain freshness signals on an active knowledge base. The change does not have to be large. Adding a single current statistic, correcting a price that has changed, or noting a tool that has been updated is sufficient. What the AI crawlers are detecting is evidence of editorial attention: a human looked at this page recently and found it accurate enough to leave up. That signal carries.
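The quarterly review is simpler to keep up if something tells you which posts are due. Here is a minimal sketch, assuming you can export each post's last substantive update date from your CMS. The URLs and dates are made up, and the 90-day default is an assumed stand-in for "quarterly"; set it to 30 if you want to track the 30-day window instead.

```python
from datetime import date


def stale_posts(posts: dict[str, date], today: date, max_age_days: int = 90) -> list[str]:
    """Return URLs whose last substantive update is older than max_age_days, oldest first."""
    overdue = [
        (modified, url)
        for url, modified in posts.items()
        if (today - modified).days > max_age_days
    ]
    return [url for modified, url in sorted(overdue)]


# Hypothetical export: URL -> date of last substantive edit.
posts = {
    "/what-is-aeo": date(2026, 4, 2),
    "/aeo-vs-seo": date(2025, 11, 15),
    "/geo-guide": date(2026, 1, 5),
}
overdue = stale_posts(posts, today=date(2026, 4, 20))
```

The script only surfaces candidates. The date in your structured data should still change only after an editor has made a real correction or addition.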

Lever 3 — Valid structured data

FAQ schema (`FAQPage` JSON-LD) still matters for AI extraction context even though Google restricted FAQ rich results to well-known government and health sites in 2023. The schema tells the extraction model how to parse the question-answer pairs. Without it, the extractor has to infer structure from HTML formatting, which introduces errors. Pair `FAQPage` with `Article` JSON-LD on knowledge posts. Validate both with Google's Rich Results Test before pushing. Broken or invalid schema is invisible and silent: it does not throw an error, it just stops working. Perplexity and ChatGPT both reference structured data when deciding whether a source's FAQ answers match a query. A page that has valid FAQPage schema for five verbatim PAA questions is giving the extraction engine a direct map: "these are the questions this page authoritatively answers, and here are the exact answers." The extraction engine does not have to guess. That map is the difference between being in the citation candidate pool and being skipped.
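For reference, this is roughly what the `FAQPage` markup looks like when generated from question-answer pairs. The sketch below follows the schema.org structure (`FAQPage` → `mainEntity` → `Question` → `acceptedAnswer`). The helper name `build_faq_jsonld` and the sample pair are illustrative, not part of any library.

```python
import json


def build_faq_jsonld(pairs: list[tuple[str, str]]) -> str:
    """Serialize (question, answer) pairs as schema.org FAQPage JSON-LD."""
    if not pairs:
        raise ValueError("FAQPage needs at least one question")
    doc = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,  # use the exact PAA wording here
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }
    return json.dumps(doc, indent=2, ensure_ascii=False)


# Illustrative pair; in practice these come from the post's FAQ section.
faq_jsonld = build_faq_jsonld([
    (
        "What is answer engine optimization?",
        "Answer Engine Optimization (AEO) is the practice of structuring content, "
        "schema markup, and entity signals so AI-powered search engines cite it as a source.",
    ),
])
```

The resulting string goes inside a `<script type="application/ld+json">` tag in the page head. Generating it from the same source as the visible FAQ keeps the two from drifting apart, but it does not replace a pass through the Rich Results Test.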

Lever 4 — Citable cluster length

AI extraction models prefer self-contained passages that fully answer one question in 134–167 words. Passages shorter than 130 words often lack enough context for the AI to quote without paraphrasing so heavily the citation loses meaning. Passages longer than 200 words require the AI to truncate, which introduces its own errors. The practical rule: write H2 opener paragraphs of 134–167 words that state the full answer to the heading question, then let following paragraphs add detail. The opener cluster is what gets extracted; the detail paragraphs are what keeps human readers engaged. The fastest audit method: paste your H2 opener paragraphs into a word counter. Any section that opens with fewer than 134 words is a candidate for a single expansion pass. Add the one key constraint, exception, or concrete example the paragraph is implying but not stating. That addition is usually 30–50 words, enough to push the cluster into the extractable range without turning it into a wall of text.
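The word-counter audit can be scripted if you have more than a handful of posts. This is a minimal sketch, assuming the opener paragraphs are already extracted as plain text. It counts whitespace-separated words and uses the 134–167 range quoted above as the default. The function name and the placeholder paragraphs are hypothetical.

```python
def audit_opener(paragraph: str, low: int = 134, high: int = 167) -> tuple[int, str]:
    """Count whitespace-separated words and say whether the opener needs work."""
    words = len(paragraph.split())
    if words < low:
        return words, "expand"
    if words > high:
        return words, "trim"
    return words, "ok"


# Placeholder openers keyed by H2 heading; real text would come from your posts.
openers = {
    "What AEO is": "word " * 90,
    "Lever 1": "word " * 150,
    "AEO vs SEO": "word " * 210,
}
results = {heading: audit_opener(text) for heading, text in openers.items()}
```

With these placeholders, the 90-word opener is flagged "expand", the 150-word one "ok", and the 210-word one "trim". Word count is only a proxy: a paragraph in range still has to answer the heading question on its own.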

AEO vs SEO — what actually differs

The tactical overlap between AEO and SEO is real: accurate content, clear structure, valid markup, and fast crawlable pages are inputs to both. The divergence is on output and optimization target. SEO optimizes for a click from a ranked result. AEO optimizes for extraction into a synthesized answer. A page can rank position 3 organically and never get cited in AI Overviews. A page can rank position 8 and be the top citation because its FAQ section perfectly matches the query's PAA and its JSON-LD is valid. The table below shows where the specific tactics diverge most sharply. The short version: backlinks still dominate traditional SEO authority scoring but have low weight in AI citation models, while verbatim PAA FAQ alignment — barely a ranking factor in traditional SEO — is the highest single-move AEO lever available on existing content.

| Tactic | SEO signal | AEO signal |
|---|---|---|
| Keyword in title/H1 | High: direct ranking factor | Medium: helps retrieval, not extraction |
| Backlinks | High: core authority signal | Low: brand mentions matter more for AEO |
| FAQ section (verbatim PAA) | Low: minor on-page signal | High: strongest AEO lever |
| JSON-LD schema | Medium: structured data helps rich results | High: required for reliable extraction |
| Content freshness | Medium: affects crawl priority | High: 3.2x citation multiplier at <30 days |
| Citable cluster length | Low: not a direct ranking factor | High: 134–167 word passages preferred |
| Brand mentions (3rd party) | Medium: indirect authority | High: direct entity signal for LLMs |
| Page speed / Core Web Vitals | High: ranking factor | Low: crawlability matters, speed less so |

The practical implication for operators running SEO programs: you do not need a separate AEO budget or a separate team. You need three changes to your content workflow — PAA-aligned FAQ sections on every knowledge post, JSON-LD validation before publish, and a quarterly freshness audit. The structural content work (depth, accuracy, clear H2 structure) is already load-bearing for SEO and carries over directly to AEO. The only genuinely AEO-specific addition is the citable cluster length discipline: checking that H2 opener paragraphs land in the 134–167 word extractable range before publishing. That is a writing habit, not a tool or a budget line. Operators who build it into their content brief template do not think about it again.
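One way to build the "JSON-LD validation before publish" step into a workflow is a quick syntax check on the rendered HTML, so that malformed markup is caught before it fails silently. The sketch below uses only the Python standard library, and the class and function names are hypothetical. It checks only that each JSON-LD block parses as JSON, not that it is valid schema.org markup, so it sits alongside the Rich Results Test and does not stand in for it.

```python
import json
from html.parser import HTMLParser


class JsonLdExtractor(HTMLParser):
    """Collect the raw text of every <script type="application/ld+json"> block."""

    def __init__(self) -> None:
        super().__init__()
        self.blocks: list[str] = []
        self._in_jsonld = False

    def handle_starttag(self, tag, attrs):
        if tag == "script" and dict(attrs).get("type") == "application/ld+json":
            self._in_jsonld = True
            self.blocks.append("")

    def handle_data(self, data):
        # Script content may arrive in several chunks, so append rather than assign.
        if self._in_jsonld:
            self.blocks[-1] += data

    def handle_endtag(self, tag):
        if tag == "script":
            self._in_jsonld = False


def check_jsonld(html: str) -> list[str]:
    """Return a list of problems; an empty list means every block parsed as JSON."""
    parser = JsonLdExtractor()
    parser.feed(html)
    parser.close()
    if not parser.blocks:
        return ["no JSON-LD blocks found"]
    problems = []
    for index, raw in enumerate(parser.blocks):
        try:
            json.loads(raw)
        except json.JSONDecodeError as exc:
            problems.append(f"block {index}: {exc.msg}")
    return problems


# A trailing comma is a typical hand-editing mistake that breaks the block.
broken_page = '<script type="application/ld+json">{"@type": "FAQPage",}</script>'
problems = check_jsonld(broken_page)
```

Run as a pre-publish hook, a non-empty `problems` list is a reason to block the publish and fix the markup first.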

What AEO does not fix

AEO is not a shortcut past domain authority. A new site with three posts and no inbound links will not displace an established domain in AI Overviews by adding FAQ schema — the citation pool is weighted toward sources with existing crawl history and entity signals. AEO moves the needle fastest for sites that already have organic traction and want to translate that traction into AI citation pickup. The realistic timeline for a site that already ranks on page one: 2–4 weeks from schema and FAQ alignment to measurable citation pickup improvement. For a site building from zero, the foundation work (content depth, crawlable structure, topical cluster architecture) takes 3–6 months before the AEO levers have anything to work with. There is no shortcut for the foundation. There is a significant shortcut for translating an existing foundation into citations.

AEO also does not fix thin or inaccurate content. Extraction models score passages partly on internal coherence and factual density. A FAQ answer that says "AEO is the process of optimizing for AI search engines" is less citable than one that says "AEO is the practice of aligning FAQ sections to verbatim PAA wording, validating JSON-LD schema, and updating content within a 30-day freshness window to trigger the 3.2x citation multiplier documented by GEO Alliance (April 2026)." Specificity is what makes content extractable. The practical test: read your H2 opener paragraph and ask whether it could be lifted verbatim into an AI answer and be useful to the reader without any surrounding context. If the answer is yes, it is citable. If it requires reading the previous section to make sense, it needs another sentence of grounding context added to the front. That single sentence — often just restating the section topic as a direct answer — is the difference between being cited and being skipped.

Practical AEO implementation for operators

For a marketing agency, coaching practice, DTC brand, or offer owner running a content program, the AEO implementation that moves citation numbers in the next 60 days has four steps, ordered by time-to-result. Steps 1 and 2, schema validation and PAA FAQ alignment, can be completed on a published post in under two hours and typically show measurable citation pickup improvement within 2–4 weeks. Steps 3 and 4, citable cluster auditing and freshness maintenance, are ongoing habits rather than one-time fixes. All four are self-serviceable without agency support for operators who have an in-house content editor and access to a PAA scraper.

The full AEO stack for a content-heavy site (programmatic schema, automated PAA monitoring, topical cluster architecture, third-party listing presence) is the AEO/GEO service we run as an engagement. But steps 1–4 above are self-serviceable for operators with an in-house content team. The only dependency is access to DataForSEO or a PAA scraper for step 2. Everything else requires only a text editor and the Rich Results Test. The distinction worth making: if you have fewer than 20 published knowledge posts, do the four steps manually on each one. If you have 50+ posts, build the PAA alignment and schema audit into a recurring workflow — the manual approach does not scale past a certain cluster size, and the opportunity cost of doing post 47 by hand is high when you could be using that time to build the third-party presence that drives the authority axis of citation pickup.

How AEO connects to GEO and the broader AI search cluster

AEO is one of three overlapping optimization surfaces in AI search. Generative Engine Optimization (GEO) focuses specifically on getting content included in the training data and retrieval corpus of large language models, a longer-cycle effort with a higher ceiling but lower direct controllability. "AEO vs SEO" covers the tactical divergences in more detail if you are running a combined program. "How to get cited by AI: the GEO/SEO guide" covers the implementation for getting citations from ChatGPT, Perplexity, and Claude specifically.

The practical relationship: SEO builds the foundation (crawlable, accurate, structured content). AEO extracts the most leverage from that foundation for AI search. GEO extends that leverage into the training data and retrieval corpus that large language models draw on. Running all three surfaces off the same content workflow is the architecture, not three separate campaigns.